What Cloud Native Applications Mean for Tech Teams in 2026


Cloud native applications are reshaping how modern software gets built, deployed, and scaled. Digital teams are no longer building for stability alone; they're building for speed, flexibility, and continuous delivery. The old model of shipping large, tightly coupled systems every few months simply cannot keep up with today's pace. Instead, organizations are breaking software into smaller, modular pieces, each developed, deployed, and scaled independently.

This approach reduces risk, accelerates releases, and makes systems far easier to maintain. This guide covers what cloud native applications are, the technologies that power them, the key trends defining 2026, and the best practices your team needs to stay competitive. Many organizations partner with a reliable custom software development company to design cloud native systems aligned with long-term scalability and delivery goals.

What Are Cloud Native Applications

Cloud native applications are software systems designed from the ground up to run in cloud environments: not just hosted there, but architected for them. Unlike traditional software deployed on fixed servers, these applications use containers, dynamic orchestration, and decoupled services to stay resilient and portable across environments.

The shift started when teams realized that lifting traditional monoliths into the cloud didn't unlock the real benefits. Truly cloud native design meant rethinking structure entirely: designing for failure, automation, and distributed workloads from day one.

Why They Matter in 2026

Cloud native applications deliver measurable business advantages that legacy systems simply cannot match. Independent services let teams ship features without waiting on a full release cycle, cutting time to market significantly. Resources scale with actual demand, so organizations stop paying for idle capacity. Failures stay isolated within a single service instead of cascading into system-wide outages.

Serverless computing amplifies this further by removing infrastructure management from developers entirely. In 2026, businesses adopting this approach are outpacing competitors on both delivery speed and operational efficiency.

Key Technologies Behind Cloud Native Applications

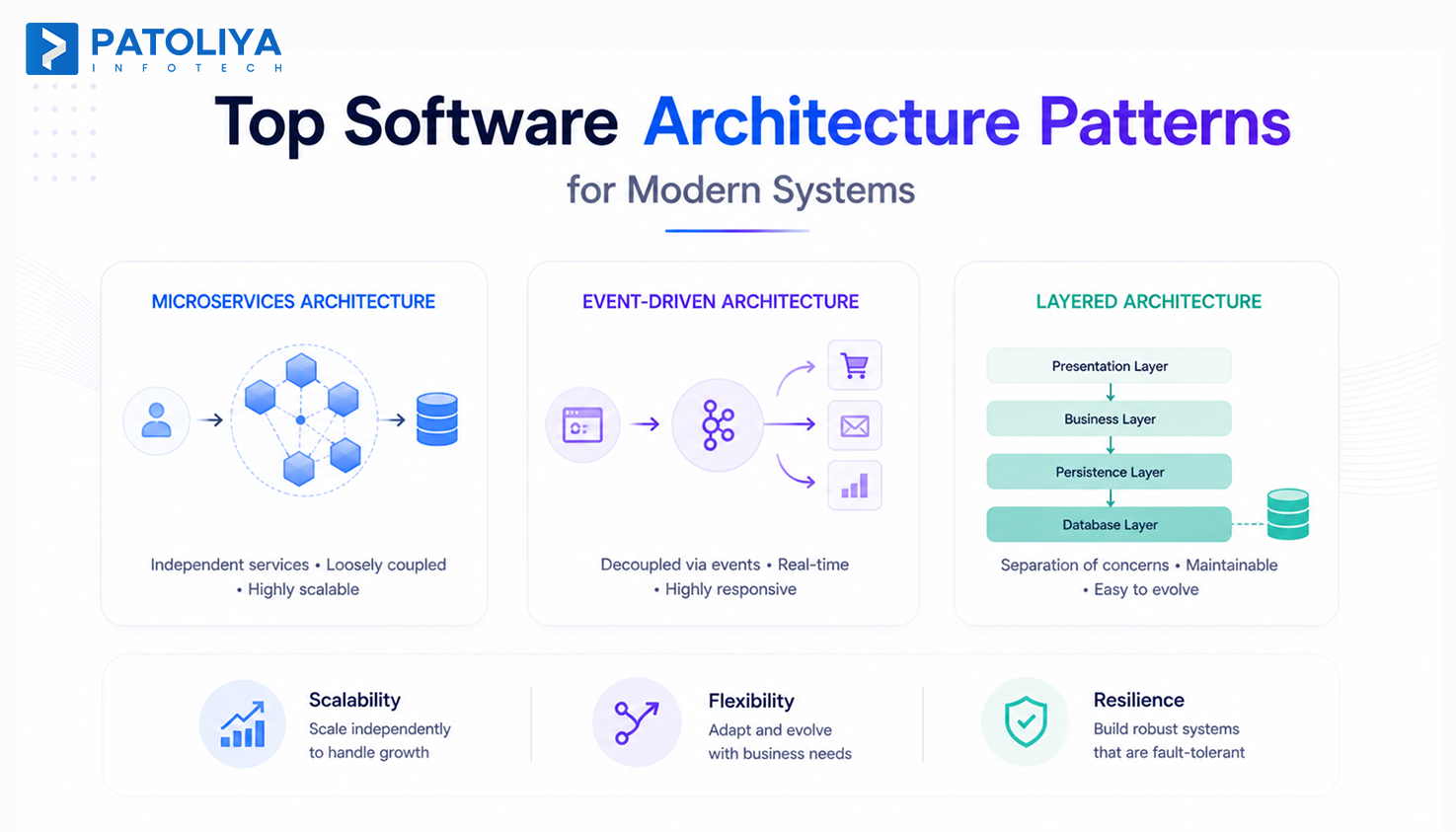

Microservices Architecture

Microservices architecture is the structural foundation of cloud native applications. Instead of one large codebase, the system is broken into independent services, each responsible for a specific function such as authentication, payments, or notifications. For instance, a streaming (OTT) platform may run hundreds of microservices; if its subtitle service fails, video playback can continue uninterrupted.

Why it works:

- Each service can be developed in a different language or framework

- Teams deploy updates to one service without touching others

- Failures are isolated: a bug in the recommendation engine doesn't take down checkout
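Here is a minimal Python sketch of the isolation idea. It assumes a caller that assembles a playback response from two services, where the video stream is critical and subtitles are optional. `fetch_video_stream`, `fetch_subtitles`, and `build_playback_page` are hypothetical stand-ins for network calls, and the subtitle service is hard-wired to fail so the degradation path is visible.

```python
def fetch_video_stream(video_id: str) -> str:
    # Hypothetical call to the playback service (critical path).
    return f"stream://{video_id}"

def fetch_subtitles(video_id: str) -> list[str]:
    # Hypothetical call to the subtitle service, hard-wired to fail for this demo.
    raise ConnectionError("subtitle service unavailable")

def build_playback_page(video_id: str) -> dict:
    """Compose a response from two services, isolating the non-critical one."""
    page = {"stream": fetch_video_stream(video_id)}  # errors here should propagate
    try:
        page["subtitles"] = fetch_subtitles(video_id)
    except ConnectionError:
        # Degrade instead of failing the whole request.
        page["subtitles"] = []
        page["warnings"] = ["subtitles temporarily unavailable"]
    return page

print(build_playback_page("ep-101"))
# {'stream': 'stream://ep-101', 'subtitles': [], 'warnings': ['subtitles temporarily unavailable']}
```

In a real deployment, isolation also depends on timeouts and separate processes. Catching the error only prevents one failed dependency from turning into a failed page.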

These modular systems are commonly delivered through structured web application development services designed for scalable SaaS and enterprise platforms.

Kubernetes and Container Orchestration

Containers package cloud native applications with everything they need to run: code, dependencies, and configuration. But managing hundreds of containers by hand is impractical. Kubernetes orchestration automates deployment through rolling updates, scales workloads under load, and restarts failed containers automatically.

The official Kubernetes documentation provides detailed guidance on orchestration best practices and workload management. For instance, a media streaming platform can use Kubernetes to manage thousands of microservices, scaling them based on listener traffic patterns.
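Traffic-based scaling in Kubernetes is usually handled by a Horizontal Pod Autoscaler. The sketch below approximates its documented core rule, `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, including the default 0.1 tolerance and min/max bounds. It is a simplification: the real controller also accounts for pod readiness, missing metrics, and stabilization windows. The function name and default bounds here are illustrative.

```python
import math

def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Approximate the Horizontal Pod Autoscaler's core scaling rule."""
    ratio = current_metric / target_metric
    # Skip scaling when the observed metric is already close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured replica bounds.
    return max(min_replicas, min(max_replicas, desired))

print(desired_replicas(4, current_metric=90, target_metric=60))   # 6: scale out
print(desired_replicas(4, current_metric=63, target_metric=60))   # 4: within tolerance
print(desired_replicas(8, current_metric=120, target_metric=60))  # 10: capped at max
```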

Serverless Computing

Serverless computing removes infrastructure management entirely for cloud native applications. Code runs in response to events like API calls, file uploads, or database changes, and scales automatically. Teams pay only for execution time, making it ideal for event-driven workloads like image processing or scheduled jobs.

For instance, a retail company running a microservices architecture can trigger serverless functions that update inventory in real time when an order is placed and resize product images as they are uploaded.
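The sketch below shows how an order-placed function might look in Python. It follows the `handler(event, context)` style that AWS Lambda uses for Python functions. The event shape, the `INVENTORY` dictionary, and the return values are assumptions made for illustration. A production function would write to a database with conditional updates, because module-level state is neither durable nor shared across instances.

```python
from collections import Counter

# Hypothetical in-memory stand-in for an inventory table.
INVENTORY = {"sku-123": 10, "sku-456": 3}

def handler(event, context):
    """Lambda-style entry point, invoked once per 'order placed' event.

    Assumed event shape: {"order_id": str, "items": [{"sku": str, "qty": int}, ...]}
    """
    requested = Counter()
    for item in event["items"]:
        requested[item["sku"]] += item["qty"]
    # Validate every SKU first so a rejected order leaves stock untouched.
    for sku, qty in requested.items():
        if INVENTORY.get(sku, 0) < qty:
            return {"status": "rejected", "reason": f"insufficient stock for {sku}"}
    for sku, qty in requested.items():
        INVENTORY[sku] -= qty
    return {"status": "reserved", "order_id": event["order_id"]}

result = handler({"order_id": "o-1", "items": [{"sku": "sku-123", "qty": 2}]}, None)
print(result, INVENTORY["sku-123"])  # {'status': 'reserved', 'order_id': 'o-1'} 8
```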

CI/CD Pipelines and DevOps Culture

CI/CD pipelines keep cloud native applications moving at speed by running every code commit through automated builds, tests, and deployments. DevOps culture ties this together. When development and operations share pipeline ownership, feedback loops tighten, release cycles compress from weeks to hours, and teams ship higher-quality code with fewer rollbacks.
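To illustrate how a pipeline gates releases, here is a minimal Python model that runs stages in order and stops at the first failure. A failing test suite therefore never reaches deployment. Real pipelines are declared in the CI system's own configuration format, so the stage names and the `run_pipeline` function here are hypothetical and only model the control flow.

```python
from typing import Callable

Stage = tuple[str, Callable[[], bool]]

def run_pipeline(commit: str, stages: list[Stage]) -> list[str]:
    """Run stages in order for one commit and stop at the first failure (fail fast)."""
    log = []
    for name, step in stages:
        ok = step()
        log.append(f"{commit}:{name}:{'ok' if ok else 'FAILED'}")
        if not ok:
            break  # later stages, including deploy, never run
    return log

stages = [
    ("build", lambda: True),
    ("unit-tests", lambda: False),  # simulate a failing test suite
    ("deploy", lambda: True),
]
print(run_pipeline("a1b2c3", stages))  # ['a1b2c3:build:ok', 'a1b2c3:unit-tests:FAILED']
```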

What To Expect From Cloud Native Trends in 2026

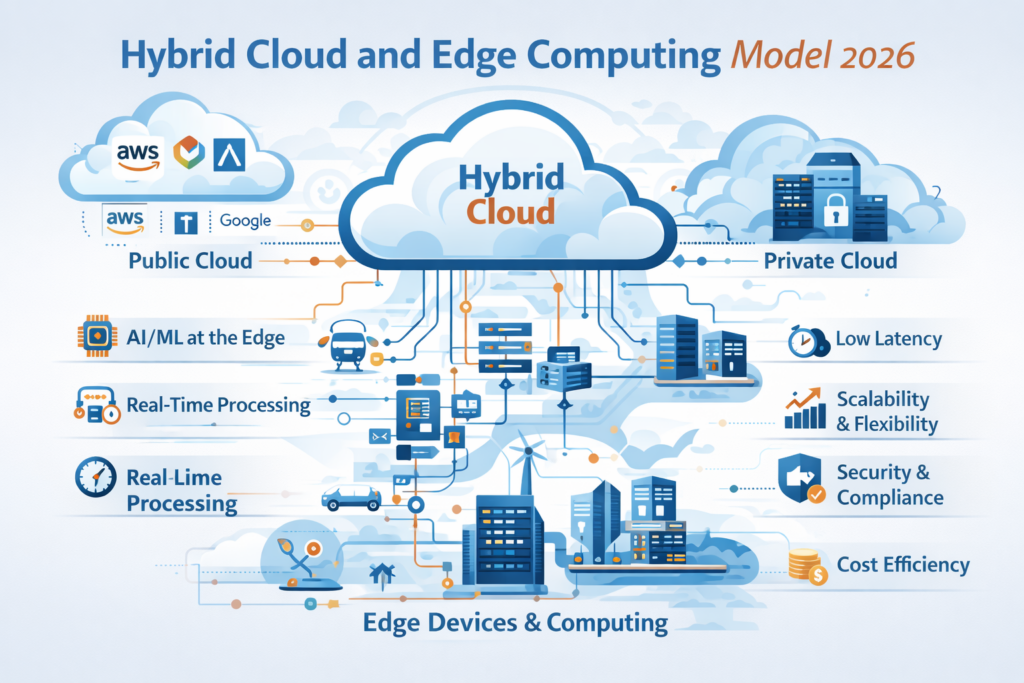

Hybrid and Multi-Cloud Strategy

Few organizations rely on a single cloud provider for their cloud native applications. Workloads are now spread across AWS, Azure, Google Cloud, and on-premises systems based on cost, compliance, and latency needs. Data sovereignty laws, vendor lock-in risks, and workload-specific strengths are driving this shift. Cloud native development makes it practical because containerized services can move between environments without major rearchitecting. Many enterprises adopt this model with guidance from experienced cloud consulting services teams to reduce architectural risk.
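Placement decisions can be modeled as a filter on compliance and latency, followed by a cost comparison. The sketch below assumes a hypothetical `Environment` record with made-up latency and cost figures. Real placement also weighs egress charges, managed-service availability, and team expertise.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Environment:
    name: str
    jurisdiction: str    # where the data would be stored
    latency_ms: float    # measured latency to the target users
    hourly_cost: float   # illustrative cost for the workload

def place_workload(envs: list[Environment], allowed_jurisdictions: set[str],
                   max_latency_ms: float) -> Optional[Environment]:
    """Cheapest environment that satisfies data-residency and latency constraints."""
    candidates = [e for e in envs
                  if e.jurisdiction in allowed_jurisdictions and e.latency_ms <= max_latency_ms]
    if not candidates:
        return None  # no compliant option: escalate rather than silently violate a rule
    return min(candidates, key=lambda e: e.hourly_cost)

envs = [
    Environment("aws-us-east", "US", 40, 1.20),
    Environment("azure-eu-west", "EU", 25, 1.35),
    Environment("gcp-eu-north", "EU", 35, 1.10),
    Environment("on-prem-frankfurt", "EU", 15, 1.60),
]
print(place_workload(envs, {"EU"}, max_latency_ms=30).name)  # azure-eu-west
print(place_workload(envs, {"EU"}, max_latency_ms=50).name)  # gcp-eu-north
```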

AI and Machine Learning Integration

AI is becoming an embedded layer of the cloud native stack. Teams are using ML models to predict traffic spikes, automate anomaly detection in logs, and dynamically adjust resource allocation. For instance, a SaaS platform can pre-warm containers before peak usage hits, cutting cold start latency without manual intervention.
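Pre-warming can be sketched as a forecast that feeds a capacity calculation. To keep the example self-contained, a least-squares linear trend stands in for the ML model. The per-container throughput and the 20% headroom are illustrative assumptions, not recommended values.

```python
import math

def forecast_next(rates: list[float]) -> float:
    """Extrapolate the next value with a least-squares linear trend (rates must be non-empty)."""
    n = len(rates)
    mean_x = (n - 1) / 2
    mean_y = sum(rates) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(rates))
    den = sum((x - mean_x) ** 2 for x in range(n))
    slope = num / den if den else 0.0  # a single sample has no trend
    return mean_y + slope * (n - mean_x)

def containers_to_prewarm(rates: list[float], per_container_rps: float,
                          running: int, headroom: float = 0.2) -> int:
    """How many extra containers to start before the predicted load arrives."""
    predicted = forecast_next(rates) * (1 + headroom)
    needed = math.ceil(predicted / per_container_rps)
    return max(0, needed - running)

# Requests per second over the last four intervals are climbing steadily.
print(forecast_next([100, 120, 140, 160]))                          # 180.0
print(containers_to_prewarm([100, 120, 140, 160], 50, running=3))  # 2
```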

Edge Computing in Cloud Native Apps

A defining edge computing trend for 2026 is pushing computation closer to where data is generated. Edge nodes process data locally, cutting latency for IoT devices, retail kiosks, and connected vehicles. Local processing also reduces bandwidth costs and keeps cloud native applications running even when connectivity to the central cloud drops. Teams increasingly combine edge nodes with serverless computing to handle event-driven workloads at the source. Cloud and edge now function as one connected continuum.
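The following is a minimal store-and-forward sketch of local processing at an edge node. Raw readings are summarized on the device, summaries queue while the uplink is down, and the queue is forwarded once connectivity returns. `EdgeBuffer`, the summary fields, and the bounded queue size are hypothetical choices for illustration.

```python
from collections import deque

class EdgeBuffer:
    """Summarize readings locally and forward summaries when the uplink is available."""

    def __init__(self, max_pending: int = 1000):
        # Bounded queue: during a long outage the oldest summaries are dropped first.
        self.pending = deque(maxlen=max_pending)

    def process(self, readings: list[float]) -> dict:
        # Local processing: ship a compact summary instead of every raw reading.
        summary = {"count": len(readings), "max": max(readings),
                   "mean": sum(readings) / len(readings)}
        self.pending.append(summary)
        return summary

    def flush(self, uplink_up: bool, send) -> int:
        """Forward queued summaries if the central cloud is reachable; return how many were sent."""
        if not uplink_up:
            return 0
        sent = 0
        while self.pending:
            send(self.pending[0])  # if send raises, the summary stays queued
            self.pending.popleft()
            sent += 1
        return sent

edge = EdgeBuffer()
delivered = []
edge.process([21.0, 22.5, 23.0])
print(edge.flush(uplink_up=False, send=delivered.append))  # 0: connectivity down, kept locally
edge.process([24.0, 25.0])
print(edge.flush(uplink_up=True, send=delivered.append))   # 2: backlog forwarded
```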

Focus On Developer Experience

Cloud native infrastructure only delivers value if developers can actually use it to build applications. Platform teams are building internal developer platforms that hide Kubernetes complexity. Tools like Backstage, Telepresence, and Crossplane give developers self-service interfaces that reduce configuration overhead, shorten onboarding time, and let teams focus on building applications rather than managing infrastructure.

Common Challenges and How Teams Overcome Them

Managing Distributed Complexity

Managing many independent services sounds clean in theory, but it creates real coordination overhead. Cloud native applications built on a microservices architecture require teams to handle service discovery, inter-service communication, and distributed configuration at once. Tracing a failure across dependent services becomes genuinely difficult.

Teams overcome this by adopting service meshes like Istio, which manage retries and load balancing at the infrastructure level. Clear API contracts and documentation standards reduce friction as systems grow.
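In a service mesh, retries and load balancing are configured declaratively (for example, in an Istio `VirtualService`) and executed by sidecar proxies, not by application code. To show only the behavior, the Python sketch below does the equivalent in-process: it rotates calls across replicas in round-robin order and retries a failed call on the next replica. The endpoint addresses and the `RoundRobinClient` class are hypothetical.

```python
import itertools

class RoundRobinClient:
    """In-process stand-in for what a mesh sidecar does: spread calls and retry failures."""

    def __init__(self, endpoints: list[str], max_attempts: int = 3):
        if max_attempts < 1:
            raise ValueError("max_attempts must be at least 1")
        self._next_endpoint = itertools.cycle(endpoints)
        self.max_attempts = max_attempts

    def call(self, send, request: str) -> str:
        last_error = None
        for _ in range(self.max_attempts):
            endpoint = next(self._next_endpoint)  # each attempt goes to the next replica
            try:
                return send(endpoint, request)
            except ConnectionError as exc:
                last_error = exc
        raise last_error  # every attempt failed

def fake_send(endpoint: str, request: str) -> str:
    # Hypothetical transport: one replica is down.
    if endpoint == "10.0.0.1":
        raise ConnectionError(f"{endpoint} refused connection")
    return f"{request} handled by {endpoint}"

client = RoundRobinClient(["10.0.0.1", "10.0.0.2", "10.0.0.3"])
first = client.call(fake_send, "GET /orders")   # 10.0.0.1 fails, retried on 10.0.0.2
second = client.call(fake_send, "GET /orders")  # next in rotation: 10.0.0.3
```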

Security Concerns And Zero Trust Approaches

Cloud native applications introduce security challenges that traditional perimeter models were never built for. Implicitly trusting anything simply because it sits inside a dynamic network of dozens of services is a risk. Many organizations align their security models with the recognized zero trust security framework to strengthen distributed system protection.

Zero trust security addresses this by requiring continuous validation of every request, regardless of origin. Teams implement mutual TLS, enforce least-privilege access, and use tools like Vault to prevent credentials from being exposed in configuration files.
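Demonstrating mutual TLS requires certificates, so the sketch below uses a simpler mechanism to show the same principle. Every request carries a signed, short-lived credential. That credential is checked for integrity, expiry, and a least-privilege scope, regardless of where the request came from. The token format, `issue_token`, `authorize`, and the hardcoded demo key are assumptions. In practice, the key would come from a secrets manager such as Vault.

```python
import hashlib
import hmac

# Assumption: demo key only. A real service would fetch this from a secrets manager.
SIGNING_KEY = b"demo-key-not-for-production"

def _sign(payload: str) -> str:
    return hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()

def issue_token(service: str, scopes: list[str], ttl_s: int, now: int) -> str:
    """Hypothetical token format: service|scope1,scope2|expiry|signature."""
    payload = f"{service}|{','.join(scopes)}|{now + ttl_s}"
    return f"{payload}|{_sign(payload)}"

def authorize(token: str, required_scope: str, now: int) -> bool:
    """Validate every request: integrity, expiry, and least-privilege scope."""
    parts = token.split("|")
    if len(parts) != 4:
        return False
    service, scopes, expiry, signature = parts
    if not hmac.compare_digest(signature, _sign(f"{service}|{scopes}|{expiry}")):
        return False  # forged or tampered credential
    if now >= int(expiry):
        return False  # expired, even if the caller sits "inside" the network
    return required_scope in scopes.split(",")

now = 1_700_000_000
token = issue_token("checkout", ["orders:read"], ttl_s=300, now=now)
print(authorize(token, "orders:read", now))        # True
print(authorize(token, "orders:write", now))       # False: scope not granted
print(authorize(token, "orders:read", now + 600))  # False: expired
forged = token.replace("orders:read", "orders:write")
print(authorize(forged, "orders:write", now))      # False: signature mismatch
```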

Observability And Debugging In Distributed Systems

When something breaks across cloud native applications running on microservices architecture, pinpointing the cause is far harder than reading a single log file. Observability tools, including distributed tracing, structured logging, and metrics dashboards, give teams visibility into how requests move through the system.

OpenTelemetry standardizes instrumentation while platforms like Grafana surface anomalies fast. Without this foundation, debugging a latency spike across multiple services becomes a slow and costly process.
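Structured logs become useful for distributed debugging once every line carries a trace ID that follows the request across services. The sketch below shows the idea using only the Python standard library (`logging`, `contextvars`, `json`). In practice, OpenTelemetry SDKs handle trace propagation automatically. The log field names and the `handle_request` function are assumptions.

```python
import contextvars
import json
import logging
import uuid

trace_id_var = contextvars.ContextVar("trace_id", default="-")

class JsonFormatter(logging.Formatter):
    """Emit one JSON object per log line, tagged with the current trace ID."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "level": record.levelname,
            "service": record.name,
            "trace_id": trace_id_var.get(),
            "message": record.getMessage(),
        })

def handle_request(logger: logging.Logger, incoming_trace_id=None) -> str:
    # Reuse the caller's trace ID if one was propagated; otherwise start a new trace.
    trace_id = incoming_trace_id or uuid.uuid4().hex
    token = trace_id_var.set(trace_id)
    try:
        logger.info("request received")
        logger.info("charge authorized")
    finally:
        trace_id_var.reset(token)  # don't leak this trace ID into unrelated work
    return trace_id

stream = logging.StreamHandler()
stream.setFormatter(JsonFormatter())
logger = logging.getLogger("payments")
logger.addHandler(stream)
logger.setLevel(logging.INFO)
handle_request(logger, incoming_trace_id="4bf92f3577b34da6a3ce929d0e0e4736")
```

Because every line from every service shares the same `trace_id`, a log backend can reassemble the path of a single slow request.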

Best Practices For Building Cloud Native Applications

Design For Failure And Resilience

Systems that cannot handle partial failure will always disappoint users at the worst moments. Cloud native applications built on microservices architecture should be designed assuming individual services will fail at some point.

Patterns like circuit breakers, retries with exponential backoff, and graceful degradation keep the overall system functional even when one component struggles. Teams that build with failure in mind ship experiences that stay reliable under real pressure.
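Here is a compact Python sketch of two of these patterns. The first is retries with capped exponential backoff and full jitter. The second is a simple circuit breaker with a half-open trial call. Thresholds, timeouts, and the `flaky_inventory` dependency are hypothetical, and the fake clock exists only to make the example deterministic. Production systems typically rely on a tested resilience library or the service mesh instead.

```python
import random
import time

def backoff_delays(attempts: int, base: float = 0.1, cap: float = 5.0, jitter: bool = True):
    """Yield exponential backoff delays: base * 2**n, capped, optionally with full jitter."""
    for n in range(attempts):
        delay = min(cap, base * (2 ** n))
        # Full jitter: pick a random delay in [0, delay] so clients don't retry in lockstep.
        yield random.uniform(0, delay) if jitter else delay

def retry(fn, max_attempts: int = 4, sleep=time.sleep, **backoff):
    """Call fn, retrying on ConnectionError with exponential backoff between attempts."""
    for delay in backoff_delays(max_attempts - 1, **backoff):
        try:
            return fn()
        except ConnectionError:
            sleep(delay)
    return fn()  # final attempt: let any error propagate to the caller

class CircuitBreaker:
    """Stop calling a failing dependency for a cool-down period, then allow a trial call."""

    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, fn, fallback):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                return fallback()  # open: fail fast and degrade gracefully
            # Half-open: let one trial call through; a single failure re-opens the circuit.
            self.opened_at = None
            self.failures = self.failure_threshold - 1
        try:
            result = fn()
        except Exception:  # in this sketch, any exception counts as a failure
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            return fallback()
        self.failures = 0  # success closes the circuit fully
        return result

# Usage with a fake clock so the example is deterministic.
now = [0.0]
breaker = CircuitBreaker(failure_threshold=2, reset_timeout=10.0, clock=lambda: now[0])
calls = {"inventory": 0}

def flaky_inventory():
    calls["inventory"] += 1
    raise TimeoutError("inventory service timed out")

results = [breaker.call(flaky_inventory, lambda: "cached stock") for _ in range(3)]
now[0] = 11.0  # cool-down elapsed
recovered = breaker.call(lambda: "live stock", lambda: "cached stock")
```

The third call in `results` never reaches the inventory service: once the circuit is open, callers get the fallback immediately instead of piling up on a struggling dependency.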

Automate Infrastructure And Deployments

Infrastructure as Code tools like Terraform and Pulumi let teams define cloud environments in version-controlled files, making provisioning and scaling repeatable and auditable.

Cloud native applications benefit from automated provisioning because it eliminates manual configuration errors and ensures consistency across development, staging, and production. Combining this with CI/CD pipelines and serverless computing means deployments happen through tested workflows rather than error-prone manual steps, with functions scaling automatically without any infrastructure overhead.
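Infrastructure as Code tools compare declared state with real state and apply only the difference, the plan/apply workflow that Terraform popularized. The sketch below models that idea with plain dictionaries. Resource names and attributes are made up, and this is not how Terraform or Pulumi are implemented internally.

```python
def plan(desired: dict, actual: dict) -> dict:
    """Diff declared resources against existing ones, like a plan step."""
    return {
        "create": sorted(desired.keys() - actual.keys()),
        "delete": sorted(actual.keys() - desired.keys()),
        "update": sorted(k for k in desired.keys() & actual.keys() if desired[k] != actual[k]),
    }

def apply(desired: dict, actual: dict) -> dict:
    """Make `actual` match `desired`; running it twice changes nothing the second time."""
    changes = plan(desired, actual)
    for name in changes["create"] + changes["update"]:
        actual[name] = dict(desired[name])
    for name in changes["delete"]:
        del actual[name]
    return changes

# Hypothetical resources; in a real repo, `desired` lives in version-controlled files.
desired = {
    "vpc-main": {"cidr": "10.0.0.0/16"},
    "db-orders": {"size": "large"},
    "queue-events": {"retention_days": 7},
}
actual = {
    "vpc-main": {"cidr": "10.0.0.0/16"},
    "db-orders": {"size": "medium"},
    "bucket-tmp": {"versioning": False},
}
print(apply(desired, actual))
# {'create': ['queue-events'], 'delete': ['bucket-tmp'], 'update': ['db-orders']}
print(plan(desired, actual))
# {'create': [], 'delete': [], 'update': []}
```

Idempotence is the property that matters here: re-running the same definition against an already-correct environment is a no-op, which is what makes automated, repeated deployments safe.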

Embed Security Early In Development

Embedding security early through DevSecOps practices means vulnerability scanning, dependency checks, and policy enforcement happen inside the pipeline rather than after deployment.

Cloud native applications stay more secure when secrets are managed centrally, container images are scanned before release, and compliance checks run automatically with every build. Environments that rely on serverless computing benefit further since the underlying infrastructure is managed by the provider, reducing the attack surface teams need to monitor and maintain.
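As an example of a check that runs inside the pipeline, here is a small secret-scanning gate. When a file appears to contain a credential, it returns a non-zero exit code that fails the build. The three patterns are a deliberately tiny, hypothetical rule set; dedicated secret and image scanners cover far more cases. `AKIAIOSFODNN7EXAMPLE` is the placeholder access key ID that AWS uses in its own documentation.

```python
import re

# Hypothetical, deliberately small rule set for illustration.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
    "hardcoded_password": re.compile(r"(?i)\bpassword\s*[:=]\s*['\"][^'\"\s]{8,}['\"]"),
}

def scan(files: dict[str, str]) -> list[tuple[str, int, str]]:
    """Return (path, line number, rule) for every line that matches a secret pattern."""
    findings = []
    for path, text in files.items():
        for lineno, line in enumerate(text.splitlines(), start=1):
            for rule, pattern in SECRET_PATTERNS.items():
                if pattern.search(line):
                    findings.append((path, lineno, rule))
    return findings

def security_gate(files: dict[str, str]) -> int:
    """Pipeline step: report findings and return a non-zero exit code to fail the build."""
    findings = scan(files)
    for path, lineno, rule in findings:
        print(f"{path}:{lineno}: possible secret ({rule})")
    return 1 if findings else 0

repo = {
    "config/app.yaml": "db_host: orders-db\npassword: 'hunter2hunter2'\n",
    "deploy/env.sh": "export AWS_ACCESS_KEY_ID=AKIAIOSFODNN7EXAMPLE\n",
    "src/main.py": "password = os.environ['DB_PASSWORD']\n",  # read at runtime: not flagged
}
exit_code = security_gate(repo)  # 1: two findings, the build fails
```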

How Patoliya Delivers High Performance Cloud Native Applications

Patoliya builds cloud native applications using microservices architecture that breaks systems into independently deployable services, giving teams the flexibility to develop, test, and release each component without disrupting the rest.

Containerization with Kubernetes ensures workloads run consistently across environments and scale automatically under demand, while DevOps-driven CI/CD pipelines compress release cycles and reduce the risk of manual deployment errors.

The team brings deep expertise across AWS and Azure cloud environments, designing systems with API-first principles that make integrations clean and maintainable from day one. Observability implementation is built into every engagement, giving clients real-time visibility into system health through structured logging, distributed tracing, and metrics dashboards.

Patoliya Infotech builds resilient cloud native applications on cost-efficient architecture, using containerization to keep every system performing reliably at scale and adapting as business requirements evolve.

Organizations often benefit from having experts evaluate their cloud modernization roadmap to identify scalability gaps and operational risks.

Conclusion

Cloud native applications are now foundational for modern software teams that need speed, scalability, and reliability. Understanding microservices architecture, containerization, serverless computing, and CI/CD pipelines gives developers and business leaders the foundation to make confident architectural decisions. The trends shaping 2026, from hybrid cloud strategy to AI-driven autoscaling and edge computing, reward teams that have invested in cloud native fundamentals. Whether you are starting fresh or modernizing an existing system, the practices and technologies covered in this guide provide a clear path forward. The organizations moving fastest today are not the largest ones; they are the ones that built their software as cloud native applications, adaptable from the start.