Vibe Coding in 2025: What It Is, How It Works, and Whether Your Team Should Adopt It

TL;DR

Vibe coding is how many developers build software today: you describe what you want in plain English, and AI writes the code. A 2023 GitHub survey found that 92% of US-based developers already use AI coding tools. The adoption is happening. The question is whether your team is doing it with structure or just vibing.

Nearly every US developer now uses AI-assisted coding tools, yet most engineering leaders cannot tell you which parts of their codebase an AI wrote last sprint. That gap is what makes vibe coding dangerous when it is unmanaged and powerful when it is structured.

Vibe coding is not a specific product. It is a workflow in which natural language replaces manual syntax writing as the developer's primary interface.

Most teams are adopting LLM code generation reactively. This guide covers what vibe coding actually does, what it costs, where it fails, and how to decide if it fits your pipeline.

What Is Vibe Coding? Definition, Origins, and Scope

Vibe coding is a development method in which a developer describes intended behavior in plain language and an AI model generates the code: full functions, components, and modules, with no syntax written by hand.

Andrej Karpathy coined the term in February 2025. His framework: describe what you want, accept the output, treat bugs as new prompts. Engineers had been working this way for over a year before it had a name.
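
Karpathy's loop can be sketched in a few lines. This is a minimal illustration, not any tool's actual implementation: `stub_model` is a hypothetical stand-in for a real assistant API, and `check` is a hypothetical one-line spec. The part worth noticing is that the error message, not a human edit, becomes the next prompt.

```python
from typing import Callable, Optional

# A "model" is any function from prompt text to source code. A real one would call
# an assistant's API; stub_model below is hypothetical so the loop runs offline.
Model = Callable[[str], str]


def check(source: str) -> Optional[str]:
    """Execute generated code against a tiny spec; return an error message or None.

    Warning: exec() runs arbitrary code. Only run untrusted model output in a sandbox.
    """
    namespace: dict = {}
    try:
        exec(source, namespace)
        if namespace["add"](2, 3) != 5:
            raise AssertionError("add(2, 3) should return 5")
    except Exception as exc:
        return f"{type(exc).__name__}: {exc}"
    return None


def vibe_loop(model: Model, request: str, max_rounds: int = 3) -> tuple[str, int]:
    """Describe, accept the output, and feed any failure back as the next prompt."""
    prompt = request
    for round_number in range(1, max_rounds + 1):
        source = model(prompt)  # accept the output as-is
        error = check(source)
        if error is None:
            return source, round_number
        # Treat the bug as a new prompt instead of editing the code by hand.
        prompt = f"{request}\n\nThe previous attempt failed with:\n{error}\nFix it."
    raise RuntimeError(f"no passing code after {max_rounds} rounds")


def stub_model(prompt: str) -> str:
    """Hypothetical model: wrong on the first try, right once it sees an error."""
    if "failed with" in prompt:
        return "def add(a, b):\n    return a + b\n"
    return "def add(a, b):\n    return a - b\n"


code, rounds = vibe_loop(stub_model, "Write a Python function add(a, b) that returns the sum.")
print(rounds)  # 2
```

Nothing in this loop reads the code. `check` is the only place a team's review standard can live, which is why the rest of this guide keeps returning to review discipline.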

What separates vibe coding from autocomplete is scope. Autocomplete finishes a line. Vibe coding handles a feature.

What Vibe Coding Covers Today

| Category | What the Developer Does | What the AI Does |
| --- | --- | --- |
| Greenfield features | Writes a plain-English description | Generates a full function or component |
| Codebase modification | Describes the change needed | Reads context and updates across files |
| Test generation | Points at an existing function | Writes unit tests with edge cases |
| Documentation | Identifies the module | Generates JSDoc, README, API reference |

LLM code generation powers all four, but each carries a different risk profile and needs its own review standard. Treat them that way.

The three adoption mistakes teams consistently make:

- Applying the same review standard to all four categories above.

- Skipping prompt engineering standards and treating every developer's prompting style as equivalent.

- Rolling out AI-assisted coding tools before configuring privacy and telemetry settings.

Understanding the category of work you are applying vibe coding to is where every smart adoption conversation starts.

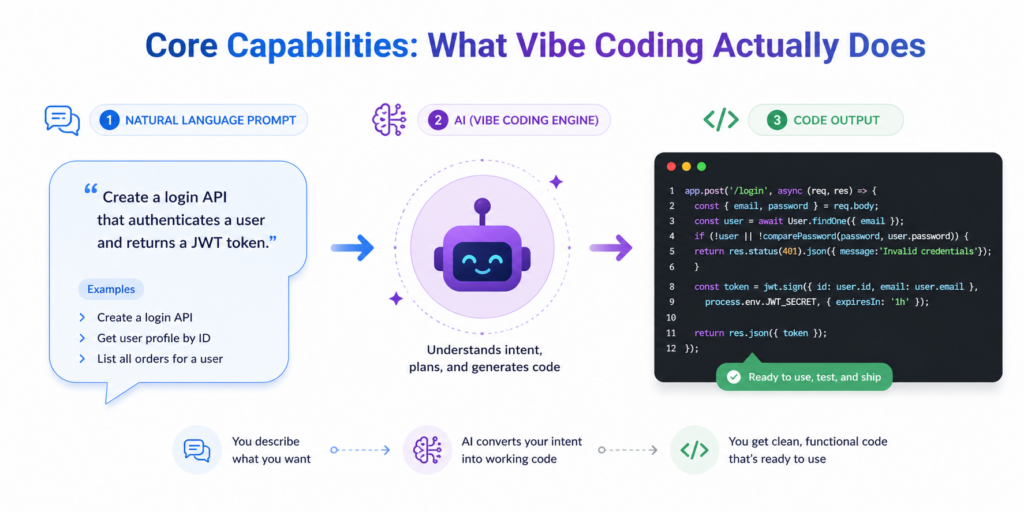

Core Capabilities: What Vibe Coding Actually Does

Prompt to Function Generation

Output quality depends on two things:

- Context richness: how much the model knows about your stack, conventions, and existing patterns.

- Prompt precision: how specifically you describe behavior, inputs, outputs, and edge cases.

A generic prompt produces generic code. Include your naming conventions, a similar existing example, and clear failure cases, and you get output that a senior developer would not be embarrassed to review.
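
A prompt template makes those two factors explicit and repeatable across a team. The sketch below is a hypothetical structure, not a format any particular tool requires; the field names simply mirror the paragraph above (behavior, inputs, outputs, conventions, an existing example, failure cases).

```python
from dataclasses import dataclass, field


@dataclass
class FeaturePrompt:
    """Hypothetical template for a context-rich, precise generation prompt."""
    behavior: str                                           # what the code should do
    inputs: str                                             # parameters and their types
    output: str                                             # return value and its type
    conventions: list[str] = field(default_factory=list)    # team naming and style rules
    reference_example: str = ""                             # similar code already in the repo
    failure_cases: list[str] = field(default_factory=list)  # edge cases and expected handling

    def render(self) -> str:
        """Assemble the fields into one plain-text prompt, skipping empty sections."""
        sections = [
            f"Task: {self.behavior}",
            f"Inputs: {self.inputs}",
            f"Output: {self.output}",
        ]
        if self.conventions:
            sections.append("Follow these conventions:\n" + "\n".join(f"- {c}" for c in self.conventions))
        if self.reference_example:
            sections.append("Match the style of this existing code:\n" + self.reference_example)
        if self.failure_cases:
            sections.append("Handle these cases explicitly:\n" + "\n".join(f"- {c}" for c in self.failure_cases))
        return "\n\n".join(sections)


prompt = FeaturePrompt(
    behavior="Parse an order quantity submitted through a web form.",
    inputs="raw: str",
    output="int, the quantity",
    conventions=["snake_case names", "raise ValueError with a user-facing message"],
    reference_example="def parse_price(raw: str) -> Decimal: ...",
    failure_cases=["empty or whitespace-only input", "zero or negative numbers", "decimals such as '1.5'"],
).render()
print(prompt)
```

Keeping templates like this in version control is one way to turn "prompting style" into a shared standard rather than a personal habit.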

Context-Aware Codebase Reasoning

Modern AI-assisted coding tools read your existing files before generating output. Cursor can hold up to 200,000 tokens of context, depending on the underlying model; GitHub Copilot Enterprise uses repository-level indexing. Practical results:

- A single prompt can update a function consistently across multiple files.

- Output follows your naming conventions without explicit instruction.

- The AI reuses dependencies already in the project rather than importing new ones.

Tasks that previously required thirty minutes of cross-file work now take a single prompt and a ten-minute review.
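
Whether a multi-file change fits in one context window can be estimated before you prompt. A rough sketch, assuming about four characters per token: that ratio is a common rule of thumb for English text, real tokenizers differ by model, and source code often tokenizes less efficiently. The 200,000-token default mirrors the figure above; the output reserve is a hypothetical parameter.

```python
import math

# Assumption: ~4 characters per token. Treat results as order-of-magnitude only.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Very rough token estimate from character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(
    files: dict[str, str],
    window_tokens: int = 200_000,
    reserve_for_output: int = 8_000,
) -> tuple[bool, int]:
    """Return (fits, estimated_tokens) for sending all files plus room for a reply."""
    total = sum(estimate_tokens(source) for source in files.values())
    return total + reserve_for_output <= window_tokens, total


# Hypothetical file sizes: three modules of about 120 KB each.
repo_slice = {f"module_{i}.py": "x" * 120_000 for i in range(3)}
print(fits_in_context(repo_slice))  # (True, 90000)
```

When the estimate comes close to the limit, splitting the change into smaller prompts is usually safer than relying on the tool to decide what to drop.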

Automated Test and Documentation Generation

This is the lowest-risk, fastest-return entry point for any organization:

- Point AI-assisted coding tools at an existing function.

- Request unit tests with specified edge cases.

- Review output for coverage gaps.

- Merge through the standard PR process.
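
Here is what steps 2 and 3 might look like in Python. Both `parse_quantity` and its tests are hypothetical illustrations, not output from a specific tool; the tests are the kind of edge-case suite the prompt should request, written with the standard-library `unittest` module.

```python
import unittest


def parse_quantity(raw: str) -> int:
    """Hypothetical existing function: parse an order quantity from form input."""
    text = raw.strip()
    if not text:
        raise ValueError("quantity is required")
    if not text.isdigit():
        raise ValueError(f"quantity must be a whole number: {raw!r}")
    value = int(text)
    if value == 0:
        raise ValueError("quantity must be at least 1")
    return value


class TestParseQuantity(unittest.TestCase):
    # Happy path
    def test_plain_number(self):
        self.assertEqual(parse_quantity("3"), 3)

    # Edge cases requested explicitly in the prompt
    def test_surrounding_whitespace_is_ignored(self):
        self.assertEqual(parse_quantity("  12 "), 12)

    def test_blank_input_rejected(self):
        with self.assertRaises(ValueError):
            parse_quantity("   ")

    def test_zero_rejected(self):
        with self.assertRaises(ValueError):
            parse_quantity("0")

    def test_negative_rejected(self):
        with self.assertRaises(ValueError):
            parse_quantity("-2")

    def test_decimal_rejected(self):
        with self.assertRaises(ValueError):
            parse_quantity("1.5")
```

Saved as `test_quantity.py`, this runs with `python -m unittest test_quantity`. Step 3 still matters: the suite never tests an upper limit (is a quantity of 10,000,000 valid?), and only a reviewer who knows the business rule can say whether that gap is acceptable.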

Full JSDoc blocks, README sections, and API references can be generated in seconds. Teams carrying documentation and test debt see immediate returns here without taking on architectural risk.

Multi-Language and Framework Coverage

Code generation AI supports Python, TypeScript, JavaScript, Go, Rust, and SQL at high reliability. Legacy languages like COBOL and ABAP have limited support and higher error rates. Teams with significant legacy codebases should pilot vibe coding on a non-critical module before broad adoption.

The Problem Vibe Coding Solves: Four Operational Challenges

Problem 1: Developer Time Wasted on Boilerplate and Repetitive Code

30 to 40% of developer time goes to low-judgment repetitive code: CRUD endpoints, form validation, config files, schema migrations.

A developer who used to spend two hours setting up a REST endpoint now spends ten minutes reviewing vibe coding output. That time compounds across every sprint.

The risk is real, too. Developers who stop writing boilerplate stop owning it. A review standard for LLM code generation output in foundation code is non-negotiable.

Problem 2: Onboarding Velocity for New Hires and Context Switching

New hires on complex codebases take 4 to 8 weeks to contribute meaningfully. With vibe coding and an indexed codebase, that window shrinks. New developers can:

- Ask the AI to explain unfamiliar modules.

- Generate implementations that match existing patterns.

- Learn conventions without pulling senior engineers away.

Senior developers stop answering the same onboarding questions every six weeks. That time adds up.

Problem 3: Uneven Skill Distribution Across Teams

Most engineering teams share the same shape: a few architects and many executors. Vibe coding narrows that gap.

Developer productivity gains are largest for mid-level developers applying LLM code generation to well-defined tasks within established patterns. The more architectural the task, the smaller the gain.

Problem 4: Documentation and Test Debt

Tests and documentation lose every prioritization fight because they don't ship features. Vibe coding changes that. Writing tests becomes a prompt that runs while you review the PR.

Teams using AI-assisted coding tools specifically for test generation report the clearest, fastest quality improvements with the lowest adoption risk.

Vibe Coding vs. Alternatives: Market Context and Competing Approaches

Vibe Coding vs. Traditional IDE Autocomplete

Traditional autocomplete works inside your existing thought process. It completes the syntax you're already writing.

Vibe coding through natural language programming replaces part of that thought process entirely. AI-assisted coding tools using LLM code generation let developers produce code in patterns they've never written before. That's more powerful. It's also where hallucination risk lives.

The evaluation standard changes when you're reviewing natural language programming output versus syntax completion.

Vibe Coding vs. No-Code and Low-Code Platforms

| Dimension | Vibe Coding | No-Code / Low-Code |
| --- | --- | --- |
| Who uses it | Developers | Non-technical users |
| Output | Code you own | Platform-locked configuration |
| Flexibility | Full language coverage | Limited to the platform |
| Scaling ceiling | None | Hits limits on complex logic |
| Vendor dependency | Tool dependency only | Runtime dependency |

No-code development solves a different problem. Conflating the two leads to buying a solution for a problem you don't have.

Vibe Coding vs. Offshore Augmentation

Offshore adds headcount. Vibe coding reduces the headcount required for the same output. These are not substitutes.

AI pair programming changes the math. A team of five that uses AI-assisted coding tools well doesn't replace fifteen developers. It changes what those five developers ship per sprint.

The right comparison is cost per feature over 12 months. Offshore carries coordination overhead and communication latency. Vibe coding carries adoption overhead and review discipline requirements. Model both before deciding.

The real question: where do LLM code generation and vibe coding offer the most leverage for your current team?

Comparison Table: Vibe Coding vs. Adjacent Approaches

| Dimension | Vibe Coding | Traditional Autocomplete | No-Code / Low-Code | Offshore Augmentation |
| --- | --- | --- | --- | --- |
| Who uses it | Developers | Developers | Non-technical users | Engineering managers |
| Output type | Production code you own | Syntax completion | Platform-locked configuration | Developer hours |
| Flexibility | Full language and framework | Within the existing file | Limited to the platform | Depends on the partner |
| Scaling ceiling | None | None | Hits limits on complex logic | Cost scales linearly |
| Vendor dependency | Tool only | Tool only | Runtime dependency | Relationship dependency |
| IP ownership | Yours with review | Yours | Shared with the platform | Contractual |
| Best fit | Teams with developers wanting speed | Developers wanting syntax help | Non-technical builders | Teams needing raw headcount |
| Risk profile | Hallucination, IP, skills atrophy | Low | Vendor lock-in | Coordination, quality variance |

Vibe Coding Tooling Cost: What Teams Actually Pay

Vibe coding tool costs follow a predictable tier structure. What teams consistently underestimate is not the license cost. It is everything that sits on top of it.

Tier 1: Individual Developer Licenses

Individual licenses for vibe coding tools run between $10 and $39 per month per seat.

GitHub Copilot Individual: VS Code, JetBrains, Neovim integration.

Cursor Pro: multi-file context, model switching between Claude and GPT-4o.

Tabnine Basic: free tier available.

Every vibe coding adoption should start here. One developer with a non-critical project and four weeks. Teams that jump straight to team rollout consistently hit avoidable problems a pilot would have caught.

Tier 2: Team and SME Deployment

Team and SME deployment for AI-assisted coding tools runs between $400 and $4,000 per month, depending on seat count and feature tier.

GitHub Copilot Business: policy controls, audit logs, organization-level management.

Cursor Business: privacy mode, SSO, team-level context sharing.

Tabnine Teams: shared context, team training on your codebase.

Moving to this tier introduces data governance questions and vendor terms that need legal review. Don't skip it.

Tier 3: Enterprise-Scale Deployment

Enterprise deployments run on custom contracts covering compliance needs and custom model access, with on-premise options such as Tabnine Enterprise for air-gapped environments.

The Costs Teams Miss

| Cost Category | Typical Range | Usually Planned? |
| --- | --- | --- |
| Tool licenses | Per seat monthly | Yes |
| Onboarding and training | $2,000 to $8,000 | Rarely |
| Prompt engineering standards | 20 to 40 hours of engineering time | No |
| CI/CD review gate setup | 10 to 20 hours of DevOps time | No |
| Legal review of vendor terms | $1,500 to $5,000 | No |

The license is the visible cost. These hidden costs determine whether vibe coding delivers ROI or creates confusion. Budget for all five before presenting a rollout plan to leadership.
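
The table above can be turned into a first-year estimate. A minimal sketch: the dollar and hour ranges come from the table, while the seat count, per-seat price, and blended hourly rate are inputs you supply (the values in the example are illustrative only). It also assumes the hidden costs are incurred once, in the first year.

```python
from dataclasses import dataclass


@dataclass
class CostRange:
    low: float
    high: float


# Ranges from the hidden-costs table (treated as one-off, first-year costs).
ONBOARDING_USD = CostRange(2_000, 8_000)
PROMPT_STANDARDS_HOURS = CostRange(20, 40)
CI_GATE_HOURS = CostRange(10, 20)
LEGAL_REVIEW_USD = CostRange(1_500, 5_000)


def first_year_cost(seats: int, seat_price_per_month: float, hourly_rate: float) -> CostRange:
    """Licenses for 12 months plus every hidden cost, as a low/high range."""
    licenses = seats * seat_price_per_month * 12

    def total(pick) -> float:
        engineering_hours = pick(PROMPT_STANDARDS_HOURS) + pick(CI_GATE_HOURS)
        return (licenses + pick(ONBOARDING_USD) + pick(LEGAL_REVIEW_USD)
                + engineering_hours * hourly_rate)

    return CostRange(total(lambda r: r.low), total(lambda r: r.high))


# Illustrative inputs: 10 seats at $19/month, $100/hour blended engineering rate.
estimate = first_year_cost(seats=10, seat_price_per_month=19, hourly_rate=100)
print(f"${estimate.low:,.0f} to ${estimate.high:,.0f}")  # $8,780 to $21,280
```

In this example the licenses are roughly a quarter of the low estimate and about a tenth of the high one, which is the point the table makes.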

ROI and Business Impact: Quantified Returns

Developer Productivity Gains

GitHub's 2022 controlled experiment found that developers using GitHub Copilot completed a coding task 55% faster than those without it. In practice, gains are highest on repetitive implementation and lowest on architectural decisions.

Vibe coding does not speed up system design meetings. It speeds up the work that is mostly typing. Set that expectation before rollout, or the disappointment is predictable.

Time to Market Impact

A ten-person team shipping 30% more code per sprint sees real compounding effects:

- Features stop waiting on one blocked developer.

- Review cycles shorten when output is consistent.

- The gap between spec and working prototype shrinks.

For two-week sprint teams, structured vibe coding adoption typically delivers one additional feature per sprint cycle within 8 to 12 weeks.

Scalability Economics

Traditional development scales linearly with headcount. LLM code generation partially breaks that relationship.

A team using AI-assisted coding tools effectively scales output without proportional headcount growth. Model a 12-month cost comparison between two junior hires and the vibe coding tooling before deciding. The math changes significantly depending on your sprint velocity and company size.
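
One way to structure that comparison is cost per additional feature over 12 months, the same yardstick suggested for offshore augmentation. A sketch: every number in the example is a hypothetical placeholder to replace with your own salary data, loaded-cost multiplier, adoption overhead, and velocity estimates.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    annual_cost: float              # total 12-month spend for this option
    extra_features_per_year: float  # features shipped beyond today's baseline (your estimate)

    @property
    def cost_per_extra_feature(self) -> float:
        if self.extra_features_per_year <= 0:
            return float("inf")     # no added output means no finite unit cost
        return self.annual_cost / self.extra_features_per_year


def cheaper_per_feature(a: Option, b: Option) -> Option:
    """Return whichever option has the lower cost per extra feature."""
    return a if a.cost_per_extra_feature <= b.cost_per_extra_feature else b


# Hypothetical placeholders, not benchmarks.
hires = Option("two junior hires", annual_cost=2 * 70_000 * 1.3, extra_features_per_year=20)
tooling = Option("tooling for a 10-person team", annual_cost=10 * 19 * 12 + 15_000, extra_features_per_year=12)

for option in (hires, tooling):
    print(f"{option.name}: ${option.cost_per_extra_feature:,.0f} per extra feature")
print("lower unit cost:", cheaper_per_feature(hires, tooling).name)
```

The ranking is sensitive to the velocity guess: if the tooling delivers only one extra feature a year, its unit cost becomes $17,280 and the hires win. That input deserves more scrutiny than the license price.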

Reduction in QA and Rework Costs

AI-generated tests can catch more edge cases than manually written tests produced under sprint pressure.

Teams using natural language programming specifically for test generation report the clearest quality improvements with the lowest adoption risk. LLM code generation in testing is the entry point with the most defensible ROI before any broader vibe coding commitment.

All four returns hinge on one thing: review discipline. Without it, development speed gains become QA rework costs.
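
Review discipline is easier to keep when CI enforces part of it. A minimal sketch of a merge gate, assuming your team labels AI-written pull requests: the `ai-generated` label, the `PullRequest` shape, and the test-file naming rule are all hypothetical, and wiring this to a real CI system or code host API is left out.

```python
from dataclasses import dataclass, field

AI_LABEL = "ai-generated"  # hypothetical label your team applies to AI-written PRs


@dataclass
class PullRequest:
    author: str
    labels: set[str] = field(default_factory=set)
    approvers: set[str] = field(default_factory=set)
    changed_files: list[str] = field(default_factory=list)


def is_test_file(path: str) -> bool:
    """Hypothetical convention: tests live under tests/ or are named test_*.py / *_test.py."""
    name = path.rsplit("/", 1)[-1]
    return path.startswith("tests/") or name.startswith("test_") or name.endswith("_test.py")


def review_gate(pr: PullRequest, min_approvals: int = 1) -> list[str]:
    """Return reasons to block the merge; an empty list means the gate passes."""
    if AI_LABEL not in pr.labels:
        return []  # other PRs fall through to the normal review rules
    problems = []
    independent_approvers = pr.approvers - {pr.author}
    if len(independent_approvers) < min_approvals:
        problems.append(f"needs {min_approvals} approval(s) from someone other than the author")
    # This sketch only looks at Python source files.
    source_changed = any(f.endswith(".py") and not is_test_file(f) for f in pr.changed_files)
    tests_changed = any(is_test_file(f) for f in pr.changed_files)
    if source_changed and not tests_changed:
        problems.append("AI-generated source changes must include test changes")
    return problems


pr = PullRequest(author="alice", labels={AI_LABEL}, approvers={"alice"}, changed_files=["app/orders.py"])
for reason in review_gate(pr):
    print("BLOCKED:", reason)
```

Requiring test changes alongside AI-written source is a blunt rule, but it forces the question every reviewer should ask: how do we know this works?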

Risks and Challenges Teams Must Address

Vibe coding carries four risk categories. None are reasons to avoid it. All are reasons to plan before you roll out AI-assisted coding tools at scale.

Code Quality and Hallucination Risk

AI models generate plausible code. Not necessarily correct code.

Syntactically valid vibe coding output can carry logic errors and wrong algorithmic assumptions that pass a rushed review and break in production.

The fix: treat natural language programming output like a contractor's first PR. Read it. Test it. Don't assume it's correct because it compiles. Teams that set this standard before rollout maintain quality. Teams that set it after an incident maintain frustration.
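
A small illustration of the gap between compiling and correct. Both functions are hypothetical: the first is the kind of plausible shortcut a model (or a rushed human) might produce, and the second applies the full Gregorian leap-year rule. A test list that includes century years catches the difference; one that only checks 2024 does not.

```python
def is_leap_year_plausible(year: int) -> bool:
    # Compiles, reads naturally, and is right for most years you would think to try.
    return year % 4 == 0


def is_leap_year(year: int) -> bool:
    # Gregorian rule: divisible by 4, except century years not divisible by 400.
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


# Expected answers chosen to include the edge cases, not just the happy path.
cases = {2024: True, 2023: False, 1900: False, 2000: True, 2100: False}

failures = [year for year, expected in cases.items() if is_leap_year_plausible(year) != expected]
print(failures)  # [1900, 2100]
assert all(is_leap_year(year) == expected for year, expected in cases.items())
```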

Intellectual Property and Licensing Exposure

The Doe v. GitHub litigation highlighted ongoing legal uncertainty in AI-generated code ownership, raising unresolved questions about whether AI-assisted coding tools trained on public repositories create licensing obligations for output resembling training data. No definitive ruling exists as of 2025.

Practical steps before any enterprise rollout:

- Review vendor terms for indemnification clauses

- Avoid AI-assisted coding tools in GPL-sensitive domains

- Get legal review in any regulated industry

GitHub Copilot Business and Enterprise and Amazon CodeWhisperer Professional offer IP indemnification. Most tools do not.

Security and Data Leakage

Every prompt sent to a cloud-hosted vibe coding tool carries information about your codebase. Proprietary business logic, auth implementations, and customer data handling code leave your environment the moment you prompt.

Configure telemetry-off modes before enabling developer access to any natural language programming tool. Reversing this sequence creates an exposure window that's hard to audit retroactively.
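
That ordering can be enforced with a pre-flight check that runs before anyone gets an assistant license. A sketch, assuming settings have already been loaded into a dictionary (VS Code's settings.json allows comments, so the standard `json` module may not parse it directly). `telemetry.telemetryLevel` is a real VS Code setting, but it governs the editor's own telemetry only; vendor-side controls such as Cursor's privacy mode or Copilot's organization policies are configured elsewhere, so `REQUIRED_SETTINGS` here is a hypothetical starting point to extend.

```python
from typing import Any, Mapping

# Hypothetical org policy: every key must hold this value before an assistant is enabled.
# `telemetry.telemetryLevel` is a real VS Code setting ("all", "error", "crash", "off").
REQUIRED_SETTINGS: Mapping[str, Any] = {
    "telemetry.telemetryLevel": "off",
}


def preflight(settings: Mapping[str, Any], required: Mapping[str, Any] = REQUIRED_SETTINGS) -> list[str]:
    """Return one message per required setting that is missing or has the wrong value."""
    violations = []
    for key, expected in required.items():
        actual = settings.get(key)  # None when the key is absent
        if actual != expected:
            violations.append(f"{key}: expected {expected!r}, found {actual!r}")
    return violations


developer_settings = {"telemetry.telemetryLevel": "all", "editor.tabSize": 4}
for problem in preflight(developer_settings):
    print("BLOCK:", problem)  # BLOCK: telemetry.telemetryLevel: expected 'off', found 'all'
```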

Skill Atrophy in Junior Developers

Natural language programming is most valuable to developers who already understand what good code looks like. When it substitutes for building that skill rather than accelerating it, you accumulate invisible debt.

Vibe coding workflows for junior developers must include regular exercises where AI-assisted coding tools are off, and the developer writes from scratch. Natural language programming should accelerate skill development, not replace it.

Vendor Selection Checklist: Evaluation Framework

Before signing any AI-assisted coding tools contract, verify:

- Does it reliably support your primary languages?

- What's the context window size? A larger window generally supports better multi-file LLM code generation.

- Is telemetry-off mode available?

- Is a data processing agreement available if your GDPR, HIPAA, or SOC 2 obligations require one?

- Does the vendor offer IP indemnification?

- Which IDEs does it support?

- Is on-premise deployment available?

- What audit and logging capabilities exist?

- What is the vendor's model transparency policy?

- Does it offer usage analytics?

Vendors design demos to show strengths. This checklist surfaces the gaps that matter. Vibe coding tool selection carries legal and security implications. Treat it as a procurement decision, not a developer preference.
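
Turning the checklist into a structured record makes it harder for a good demo to paper over a missing item. A minimal sketch: the field names are hypothetical labels for the questions above, vendors appear only as placeholders, and which items count as must-haves is your call (a regulated team might add on-premise deployment, for example).

```python
from dataclasses import dataclass

# Yes/no questions from the vendor checklist, as hypothetical field names.
CHECKLIST = (
    "primary_languages_supported",
    "adequate_context_window",
    "telemetry_off_mode",
    "data_processing_agreement",
    "ip_indemnification",
    "supports_our_ides",
    "on_premise_option",
    "audit_logging",
    "model_transparency_policy",
    "usage_analytics",
)


@dataclass
class VendorAnswers:
    name: str
    answers: dict[str, bool]  # unanswered questions count as "no"


def evaluate(vendor: VendorAnswers, must_haves: set[str]) -> tuple[bool, list[str]]:
    """Pass only if every must-have is 'yes'; also report every gap for procurement."""
    unknown = must_haves - set(CHECKLIST)
    if unknown:
        raise ValueError(f"not on the checklist: {sorted(unknown)}")
    gaps = [item for item in CHECKLIST if not vendor.answers.get(item, False)]
    return must_haves.isdisjoint(gaps), gaps


must_haves = {"telemetry_off_mode", "data_processing_agreement", "audit_logging"}
vendor_a = VendorAnswers("Vendor A", {item: True for item in CHECKLIST} | {"on_premise_option": False})
vendor_b = VendorAnswers("Vendor B", {"telemetry_off_mode": True, "audit_logging": True})

for vendor in (vendor_a, vendor_b):
    passes, gaps = evaluate(vendor, must_haves)
    print(vendor.name, "passes" if passes else "fails", "| gaps:", gaps)
```

Reporting every gap, not just the blocking ones, gives legal and security reviewers the full picture before a contract is signed.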

Leading Vibe Coding Tools and Platforms: Vendor Profiles

The AI-assisted coding tools market has consolidated around four platforms covering the majority of enterprise deployments. Each serves a different primary use case.

GitHub Copilot

Market leader in installed vibe coding seat count. Integrates natively into VS Code, JetBrains, and Neovim.

Business and Enterprise tiers add repository-level indexing, PR summaries, and IP indemnification, one of the few AI-assisted coding tools that offer this.

Best for: Teams wanting LLM code generation inside existing GitHub review workflows.

Pricing: $10/month individual, $19/seat Business, Enterprise custom.

Rating: 4.5/5

Its context window is smaller than Cursor's, the most consistent enterprise complaint.

Cursor IDE

A VS Code fork built specifically for vibe coding. Holds more context than GitHub Copilot. Switches between Claude and GPT-4o as the underlying model.

Agent mode runs terminal commands, executes tests, and iterates on failures autonomously.

Best for: Developers wanting the highest ceiling on prompt-driven development for complex multi-file tasks.

Pricing: $20/month Pro, $40/seat Business

Rating: 4.7/5

Highest raw capability. Steeper learning curve without prior prompt engineering experience.

Amazon CodeWhisperer (now Amazon Q Developer)

Built-in OWASP Top 10 scanning and reference tracking for open-source training data. The most direct IP risk mitigation in code generation AI today.

Best for: AWS-heavy teams and regulated industries requiring security scanning.

Pricing: Free tier, $19/user/month Professional.

Rating: 4.2/5

LLM code generation quality rated below Copilot and Cursor in independent surveys.

Tabnine Enterprise

The only major AI-assisted coding platform supporting fully on-premise deployment. Trains on your codebase for organization-specific suggestions. Covers 30+ languages, including legacy ones.

Best for: Government, financial services, healthcare teams where code cannot leave the environment.

Pricing: Free basic, $15/seat Teams, Enterprise custom

Rating: 4.1/5

Highest compliance rating. Raw suggestion quality is below Copilot and Cursor.

Why Patoliya Infotech for AI-Integrated Software Development

Most organizations face the same gap: AI-assisted coding tools are available, but the implementation structure that makes LLM code generation safe in a real delivery pipeline is not.

Patoliya Infotech works at the intersection of vibe coding and production-grade software delivery:

- Workflow assessment before tooling: We evaluate your pipeline, stack, and team before recommending which tools fit and which create risk

- Prompt engineering standards: System prompt templates, review gate definitions, and CI/CD integration guidelines for consistent, reviewable output

- Security and compliance configuration: Privacy modes, data processing agreements, and telemetry settings configured before the first developer prompt hits a vendor server

Teams that adopt natural language programming without this infrastructure ship fast for six weeks and spend three months cleaning up code nobody can explain.

Conclusion

Vibe coding is a workflow shift, not a productivity shortcut. The teams that see real returns are not the ones that adopted fastest. They are the ones that adopted with structure.

That means treating AI-assisted coding tools as a discipline, not a convenience: review standards defined before the first prompt hits production, prompt engineering conventions your whole team follows, and clear boundaries on where LLM code generation owns the output and where human judgment still does.

92% of US developers already use AI-assisted coding tools. The adoption is not coming. It is already in your codebase. The only question is whether your vibe coding workflow is structured or accidental.

The capability is mature. The question is whether your team is set up to use it with structure. Let's find out together.