What Is Synthetic Data Generation? Use Cases & Tools

TLDR: Synthetic data generation creates statistically realistic datasets that contain no real personal records, easing data scarcity, labeling cost, and compliance constraints at once. Adoption is accelerating as teams look for automated, cost-efficient ways to scale training data. This guide covers how it works, which tools lead in 2026, and what implementation actually costs.

Synthetic data is becoming essential for modern model development. Real datasets are costly to label, restricted by GDPR and HIPAA, and often too imbalanced for production use. Teams that wait for clean real data face training delays and miss deployment windows that their competitors do not.

Synthetic data generation removes that bottleneck at the source. This guide covers what it is, how it works, which tools dominate in 2026, and what it costs to implement at scale. By the end, you will have a vendor comparison framework, a cost benchmark, and a checklist to evaluate synthetic data generation solutions for your pipeline.

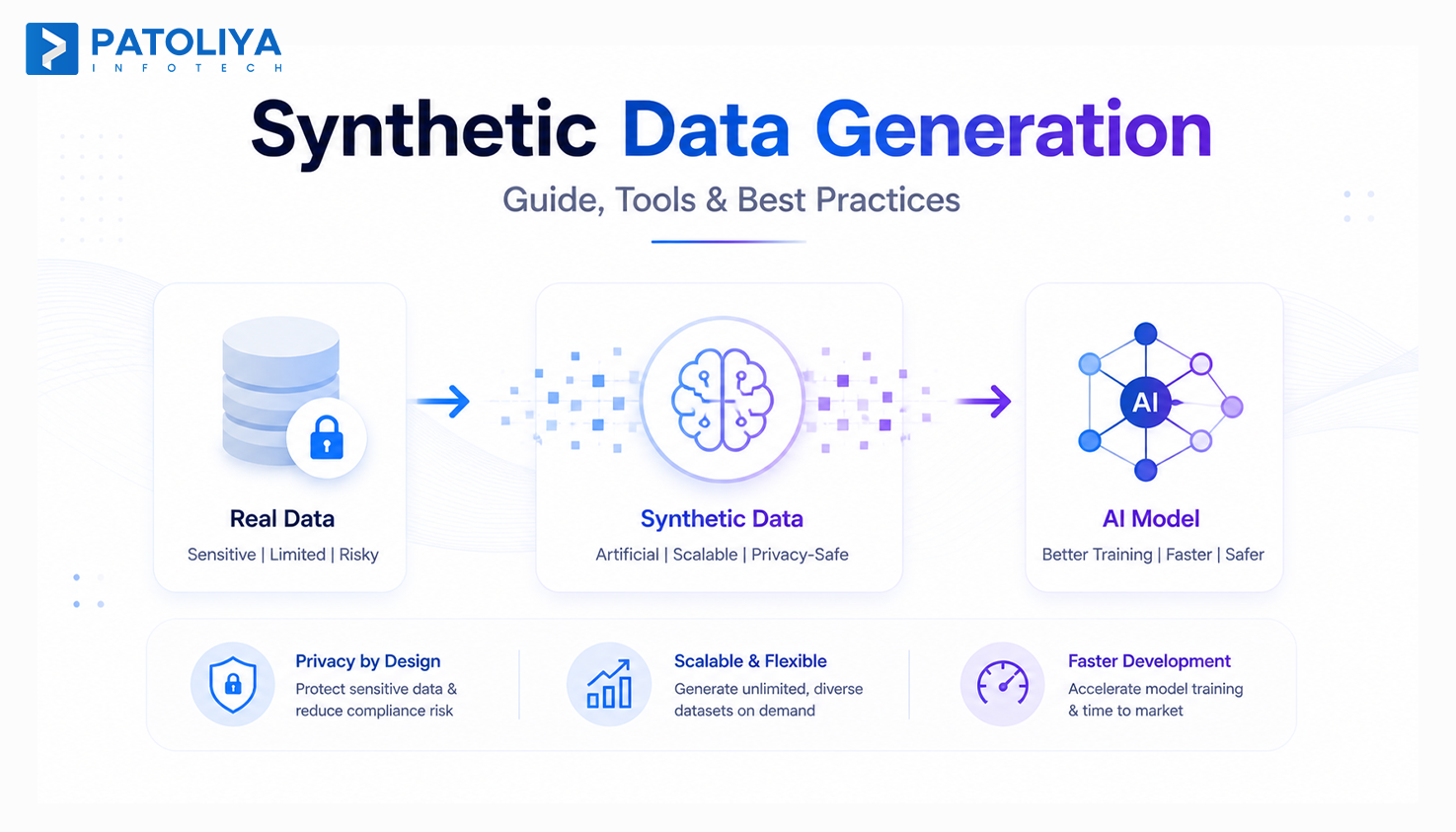

What Is Synthetic Data Generation?

Synthetic data generation is the process of creating artificial datasets that mirror the statistical properties and relationships of real data without containing any actual personal records.

The mechanism depends on the data type. For tabular data, GANs and VAEs learn underlying distributions and produce new rows. For images, diffusion models generate pixel-level, realistic outputs. For text, fine-tuned LLMs produce privacy-compliant data at scale.

What separates synthetic data generation from random data generation is fidelity. A good synthetic data generator preserves the correlation between age and income, or between transaction frequency and fraud probability, while generating records that never existed.
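
To make the fidelity point concrete, the sketch below contrasts column-by-column random sampling with a generator that learns the joint relationship first. It is a minimal illustration using only the Python standard library. The toy age and income data, the linear-plus-noise model, and every function name are assumptions made for this example, and the simple fitted line stands in for the GAN or VAE a real platform would train.

```python
import random
import statistics

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

def fit_line(xs, ys):
    """Least-squares slope, intercept, and residual standard deviation."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = my - slope * mx
    residuals = [y - (intercept + slope * x) for x, y in zip(xs, ys)]
    return slope, intercept, statistics.pstdev(residuals)

rng = random.Random(0)
N = 5000

# Toy "real" table (hypothetical): income rises with age, plus noise.
real_age = [rng.uniform(20, 65) for _ in range(N)]
real_income = [1000 * age + rng.gauss(0, 8000) for age in real_age]

# Random generation: each column is sampled on its own, so every marginal
# looks plausible but the age-income relationship is destroyed.
random_age = rng.choices(real_age, k=N)
random_income = rng.choices(real_income, k=N)

# Fidelity-preserving generation: learn the joint structure, then sample
# brand-new rows from the fitted model.
slope, intercept, noise_sd = fit_line(real_age, real_income)
synth_age = [rng.uniform(min(real_age), max(real_age)) for _ in range(N)]
synth_income = [intercept + slope * a + rng.gauss(0, noise_sd) for a in synth_age]

print(f"real      r = {pearson(real_age, real_income):.2f}")
print(f"random    r = {pearson(random_age, random_income):.2f}")
print(f"synthetic r = {pearson(synth_age, synth_income):.2f}")
```

The randomly sampled table should show a correlation near zero, while the modeled table should land close to the real value. That gap is what a fidelity benchmark measures.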

The question for engineering teams is not whether synthetic data generation works. Autonomous-vehicle developers such as Waymo already rely heavily on simulated scenes to train and test perception systems. The question is which modality and compliance architecture fits your pipeline.

Core Capabilities: What Synthetic Data Generation Actually Does

Tabular Data Synthesis

Tabular synthetic data generation is the most mature modality available today. Platforms like MOSTLY AI and Gretel.ai produce realistic synthetic records from structured CSVs and SQL exports while preserving referential integrity across tables.

Teams generate test data synthetically in hours instead of waiting days on masking workflows that break foreign key relationships anyway.
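
Referential integrity is easy to check mechanically. The following sketch, which assumes a hypothetical two-table schema (`customers` and `orders`) and a hypothetical helper named `orphaned_keys`, shows one way a generated dataset can keep foreign keys valid and how a careless masking step can break them. It does not reflect how any particular platform implements this.

```python
import random

def orphaned_keys(child_rows, fk, parent_rows, pk):
    """Return foreign-key values in child_rows that match no parent row."""
    parent_keys = {row[pk] for row in parent_rows}
    return {row[fk] for row in child_rows if row[fk] not in parent_keys}

rng = random.Random(42)

# Generate the parent table first.
customers = [
    {"customer_id": i, "segment": rng.choice(["retail", "business"])}
    for i in range(1, 101)
]

# Child rows draw their foreign key from the generated parent keys,
# so every order points at a customer that exists.
customer_ids = [c["customer_id"] for c in customers]
orders = [
    {
        "order_id": n,
        "customer_id": rng.choice(customer_ids),
        "amount": round(rng.uniform(5, 500), 2),
    }
    for n in range(1, 1001)
]

# A careless masking step that rewrites the key in only one table.
masked_customers = [
    {**c, "customer_id": c["customer_id"] + 10_000} for c in customers
]

intact = orphaned_keys(orders, "customer_id", customers, "customer_id")
broken = orphaned_keys(orders, "customer_id", masked_customers, "customer_id")
print(len(intact))      # 0
print(len(broken) > 0)  # True
```

Running a check like this against a vendor's output on your own schema is a quick way to confirm the integrity claim before relying on it in QA.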

Image and Video Synthesis for Computer Vision

Computer vision pipelines need labeled images in volumes that manual annotation cannot supply fast enough.

Diffusion models generate pre-labeled training images at scale, giving teams coverage of rare classes and edge cases.

Augmenting real datasets with synthetic images fills class gaps for low-light detection, manufacturing defect inspection, and autonomous vehicle perception models.

Text and NLP Synthetic Data

NLP teams use synthetic text to produce domain-specific corpora and adversarial examples without exposing proprietary data.

Synthetic text also covers low-resource language gaps and fine-tuning needs that real data collection cannot fill at a reasonable cost or within the compliance boundaries of regulated industries.

Time-Series and Event Stream Synthesis

Financial transaction streams and IoT logs are difficult to share due to compliance constraints. Synthetic data generation for time-series enables teams to generate test data while preserving temporal patterns, seasonality, and anomaly distributions.

This makes it viable as model training data for fraud detection and predictive maintenance, where real data access requires weeks of legal approval before a single training run starts.
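
As a rough illustration of what "preserving seasonality" means, the sketch below estimates a daily profile from a toy hourly series and rebuilds a synthetic series from that profile plus resampled residuals. This is a deliberately simple seasonal bootstrap chosen for the example, not the method any vendor uses. It ignores autocorrelation in the residuals and does not model anomalies, and the series itself is made up.

```python
import math
import random
import statistics

PERIOD = 24  # hourly readings with a daily cycle (assumed)
DAYS = 60
rng = random.Random(1)

# Toy "real" sensor series: a daily sine cycle plus Gaussian noise.
real = [
    100 + 20 * math.sin(2 * math.pi * (t % PERIOD) / PERIOD) + rng.gauss(0, 3)
    for t in range(PERIOD * DAYS)
]

def seasonal_profile(series, period):
    """Mean value at each phase of the cycle (for example, each hour of day)."""
    return [statistics.fmean(series[phase::period]) for phase in range(period)]

profile = seasonal_profile(real, PERIOD)
residuals = [x - profile[t % PERIOD] for t, x in enumerate(real)]

# Synthetic series: the learned profile plus residuals drawn with replacement.
synthetic = [
    profile[t % PERIOD] + rng.choice(residuals) for t in range(PERIOD * DAYS)
]

synthetic_profile = seasonal_profile(synthetic, PERIOD)
max_gap = max(abs(a - b) for a, b in zip(profile, synthetic_profile))
print(f"largest hourly profile gap: {max_gap:.2f}")
```

A small gap between the two profiles indicates the daily pattern survived. A production evaluation would also compare autocorrelation and anomaly frequency, which this sketch leaves out.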

Key Problems Solved by Synthetic Data Generation

Problem 1: Training Data Scarcity in Regulated Industries

- Healthcare and finance teams cannot share patient records or transaction histories across model development environments.

- Synthetic data generation produces GDPR-safe test datasets that are statistically equivalent to real records but contain no real individuals, which is intended to keep them outside the scope of personal data processing.

- A radiology team training a tumor detection model generates thousands of synthetic scan images instead of waiting months on hospital data governance committees for access approval.

Problem 2: Class Imbalance Degrading Model Performance

- Fraud represents 0.1% of real transaction data. A model trained on that distribution predicts "not fraud" almost universally and still scores high accuracy while being operationally useless.

- Synthetic data generation creates minority-class examples at any ratio needed. Teams generate test data for rare classes until the dataset is balanced.

- Synthetic minority-class examples improve model performance without depending on more real data. No additional real records are touched, and no new compliance review is triggered.
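
The accuracy trap described above, and the number of synthetic rows needed to escape it, can be worked out directly. The sketch below assumes a hypothetical dataset of one million transactions at the 0.1% fraud rate quoted in this section. The function names are illustrative.

```python
import math

def always_negative_metrics(n_legit, n_fraud):
    """Accuracy and fraud recall of a model that never predicts fraud."""
    accuracy = n_legit / (n_legit + n_fraud)
    recall = 0 / n_fraud  # it catches none of the fraud cases
    return accuracy, recall

def synthetic_rows_needed(n_legit, n_fraud, target_share):
    """Synthetic fraud rows required so fraud makes up target_share of the data.

    Solves (n_fraud + s) / (n_legit + n_fraud + s) = target_share for s.
    """
    total = n_legit + n_fraud
    s = (target_share * total - n_fraud) / (1 - target_share)
    return max(0, math.ceil(s))

n_legit, n_fraud = 999_000, 1_000  # 0.1% fraud
accuracy, recall = always_negative_metrics(n_legit, n_fraud)
print(f"accuracy = {accuracy:.1%}, fraud recall = {recall:.0%}")
# prints: accuracy = 99.9%, fraud recall = 0%

print(synthetic_rows_needed(n_legit, n_fraud, 0.5))  # 998000
```

A 50/50 balance is only one option. Many teams pick a lower target share and evaluate on an untouched, real-distribution holdout so that reported metrics reflect production conditions.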

Problem 3: Slow Test Environments Due to Masked Real Data

- Masking production data for QA takes days of engineering time, breaks referential integrity across tables, and still carries residual re-identification risk.

- Synthetically generated data for QA and ML environments maintains structural integrity across foreign keys and deploys in hours.

- Teams using synthetic data generation for test environments report eliminating 2 to 4 days of pre-sprint data preparation per release cycle.

Problem 4: High Cost and Latency of Manual Data Labeling

- Manual annotation costs $0.05 to $0.15 per label for basic classification. A 500,000-image dataset runs $25,000 to $75,000 in labeling spend.

- Synthetic data generation produces pre-labeled data by design. Every generated image or record carries its ground-truth label automatically, eliminating that cost line.

- Annotation and anonymization workflows that consume data preparation budgets shrink substantially when teams adopt an AI-first synthetic data pipeline.
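
The labeling arithmetic above is easy to reproduce for your own dataset size. This small helper is a sketch. The function name is made up, and the default per-label prices are simply the $0.05 to $0.15 range quoted in this section.

```python
def labeling_cost_range(n_items, low_cents=5, high_cents=15):
    """Return (low, high) labeling spend in whole dollars.

    Prices are kept in integer cents to avoid floating-point rounding.
    """
    return n_items * low_cents // 100, n_items * high_cents // 100

print(labeling_cost_range(500_000))  # (25000, 75000)
print(labeling_cost_range(200_000))  # (10000, 30000)
```

Swap in your vendor's actual per-label price. Complex annotation such as segmentation masks or bounding boxes is typically priced higher than basic classification.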

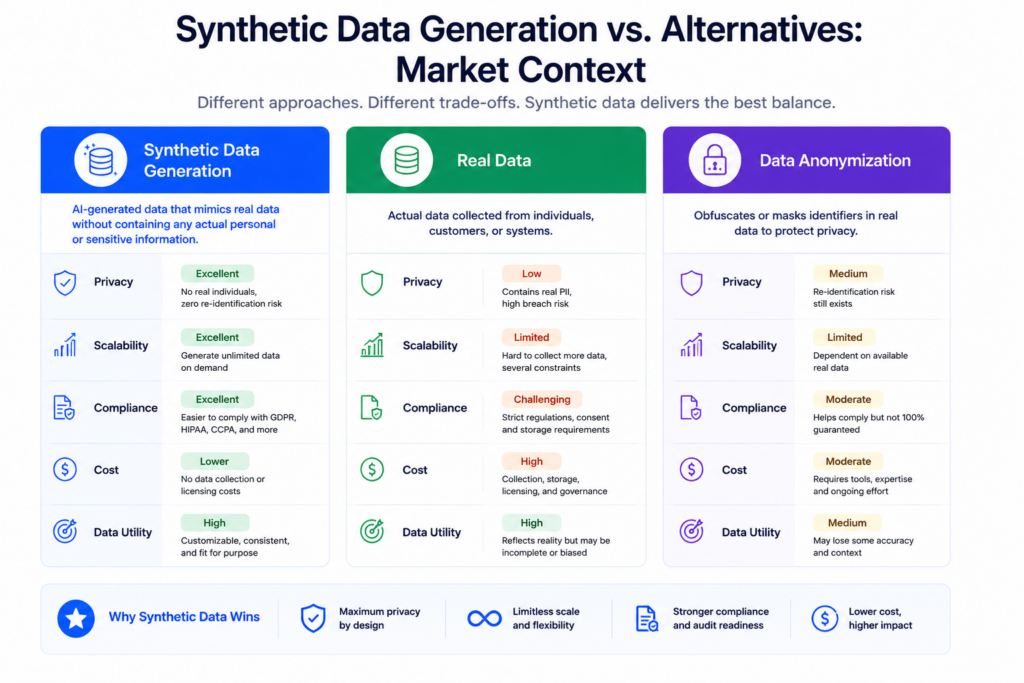

Synthetic Data Generation vs. Alternatives: Market Context

Synthetic Data vs. Data Anonymization

Data anonymization removes identifiers but still carries re-identification risk. Synthetic data lowers that risk because no generated record corresponds to a real person.

- Data anonymization strips personal identifiers but remains vulnerable. A 2019 study in Nature Communications (Rocher, Hendrickx, and de Montjoye) estimated that 99.98% of Americans could be correctly re-identified using just 15 demographic attributes.

- Synthetic data generation creates records from scratch, which reduces both GDPR personal data processing obligations and exposure to re-identification.

Synthetic Data vs. Data Augmentation

Data augmentation improves existing data. Synthetic data generation expands beyond its limits entirely.

- Data augmentation modifies real examples through rotation, cropping, or synonym replacement. It is bounded by your original dataset and inherits every bias within it.

- Synthetic data generation samples new records from a learned distribution, making it structurally stronger for class imbalance and rare-event scenarios where real examples are scarce.

Synthetic Data vs. Real Data Collection

Real data collection remains the fidelity standard. Synthetic data generation solves the timing and access constraints that block it.

- Real data works best when available, compliant, and sufficient in volume. In regulated industries, those three conditions rarely align at the same time.

- Synthetic data removes development delays while real data collection continues. Most mature ML teams use synthetic data to accelerate development and real data for final production validation.

Synthetic Data Generation Pricing and Cost Breakdown

SaaS Platform Pricing (Subscription Model)

| Platform | Free Tier | Mid Tier | Enterprise |
| --- | --- | --- | --- |
| MOSTLY AI | Yes | ~$500/month | Custom |
| Gretel.ai | Yes | $500–$2,000/month | Custom |
| YData | Yes (open source) | $300–$1,500/month | Custom |
| Hazy | No | From $2,000/month | Custom |
| Synthesis AI | No | Custom only | Custom |

Managed Service Pricing

Custom synthetic data generation implementations run $30,000 to $100,000 for initial scoping and generative model training. Ongoing maintenance costs $2,000 to $5,000 per month. Source: Clutch and GoodFirms AI benchmarks, Q1 2025. These apply to full-stack builds. SaaS-only deployments for teams just starting to generate test data synthetically sit at the lower end.

Hidden Costs to Budget For

Three cost lines teams consistently miss:

- GPU compute for image and video synthetic data generation: $500 to $3,000 per month.

- Bias auditing tooling: $200 to $800 per month.

- Pipeline integration engineering: 40 to 120 hours one-time.
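
Putting the managed-service figures and the hidden cost lines together gives a rough first-year range. The sketch below uses only the dollar ranges quoted in this section. The function name and the hourly engineering rate are placeholders, so replace `HOURLY_RATE` with your own figure.

```python
def first_year_cost(setup, monthly_lines, months=12):
    """Sum a one-time setup fee and recurring monthly lines over a period."""
    return setup + months * sum(monthly_lines)

# (low, high) figures quoted in this section, in US dollars.
SETUP = (30_000, 100_000)      # initial scoping and generative model training
MAINTENANCE = (2_000, 5_000)   # per month
GPU_COMPUTE = (500, 3_000)     # per month, image and video workloads
BIAS_AUDITING = (200, 800)     # per month
INTEGRATION_HOURS = (40, 120)  # one-time engineering effort

HOURLY_RATE = 100  # hypothetical placeholder, not a figure from this guide

low = first_year_cost(SETUP[0], [MAINTENANCE[0], GPU_COMPUTE[0], BIAS_AUDITING[0]])
high = first_year_cost(SETUP[1], [MAINTENANCE[1], GPU_COMPUTE[1], BIAS_AUDITING[1]])
print(low, high)  # 62400 205600

integration = tuple(hours * HOURLY_RATE for hours in INTEGRATION_HOURS)
print(integration)  # (4000, 12000) at the placeholder rate
```

The spread between the low and high totals is wide, which is why pricing should be verified at your actual target volume before committing.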

Contract Models

Most synthetic data vendors offer monthly billing with 15 to 25% annual discounts. Enterprise contracts carry minimum row or API call commitments. Verify whether pricing covers test data generation and production model training separately. Some vendors tier these differently, and the gap between quoted and actual cost at scale can be large.

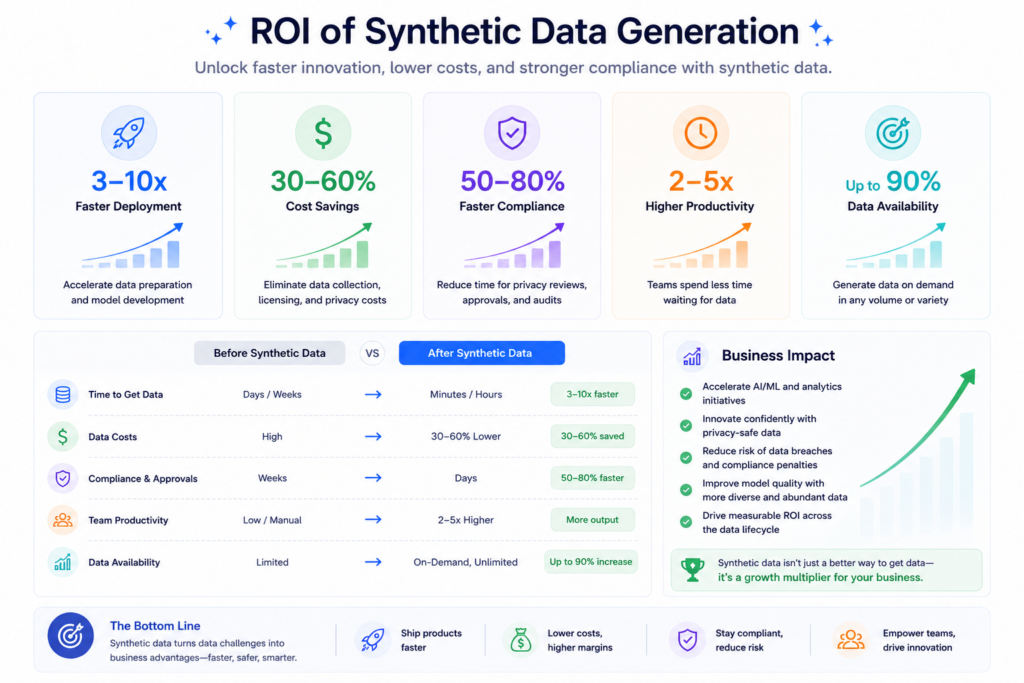

ROI and Business Impact of Synthetic Data Generation

Labeling Cost Elimination

Pre-labeled output from synthetic data generation eliminates annotation spend for generated data. A team producing 200,000 synthetic images avoids $10,000 to $30,000 in labeling costs per training cycle. That saving repeats every cycle with no additional annotation effort.

Accelerated Time-to-Model

Data access approvals in regulated industries take 4 to 12 weeks on average. Synthetic data removes that dependency. Teams begin model development the same week instead of waiting for legal clearance. Training data that once required months of procurement can be generated in days.

Compliance Risk Reduction

Synthetic data generation removes most GDPR processing obligations from development work. When no real personal data is present, there is no breach notification risk and no DPA review before training begins. Teams in healthcare and finance that train on synthetic data report a 60 to 80% reduction in legal review cycles before model approval.

Scalability Economics

Real data collection costs scale linearly with volume. Synthetic data generation scales at marginal cost after the initial generative model is trained: producing ten million synthetic rows instead of one million adds only sampling compute, with no new collection or labeling. For growing training data requirements, that economic gap compounds with every additional training run.

Risks and Challenges in Synthetic Data Generation

Model Fidelity vs. Privacy Trade-Off

Higher statistical fidelity in synthetic data generation means the output more closely resembles real records, which increases re-identification risk.

Tools that maximize fidelity for model accuracy can weaken privacy protection. Measure both metrics during evaluation. Most platforms let you tune the trade-off explicitly.
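
One common heuristic for the privacy side of this trade-off is distance to closest record: how near each synthetic row sits to its nearest real row. The sketch below is a minimal, dependency-free version with made-up data and hypothetical function names. It is a screening check only and does not provide a formal guarantee such as differential privacy.

```python
import math
import random

def distance_to_closest_record(synthetic, real):
    """For each synthetic row, the Euclidean distance to its nearest real row."""
    return [min(math.dist(s, r) for r in real) for s in synthetic]

def share_of_near_copies(synthetic, real, threshold=1e-9):
    """Fraction of synthetic rows that sit (almost) on top of a real row."""
    distances = distance_to_closest_record(synthetic, real)
    return sum(d <= threshold for d in distances) / len(distances)

rng = random.Random(7)

def draw_row():
    # Hypothetical (age, income in thousands) pair.
    return (rng.gauss(40, 10), rng.gauss(50, 15))

real = [draw_row() for _ in range(300)]

# Generator A has memorised its training set and emits real rows verbatim:
# perfect fidelity, no privacy.
memorised = rng.sample(real, 100)

# Generator B draws fresh rows from the same distribution.
fresh = [draw_row() for _ in range(100)]

print(share_of_near_copies(memorised, real))  # 1.0
print(share_of_near_copies(fresh, real))      # 0.0
```

On real tables, scale or normalize the columns before computing distances so that no single feature dominates, and report this figure next to your fidelity metric rather than in isolation.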

Bias Amplification Risk

A generative model trained on biased real data reproduces those biases in its output and can amplify them. A hiring dataset with gender bias can produce synthetic records with the same or stronger gender bias.

Bias auditing using Fairlearn or IBM AI Fairness 360 before production deployment is non-negotiable regardless of which synthetic data tools you use.
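
A basic amplification check compares the outcome gap between groups in the real data with the same gap in the synthetic data. The sketch below computes that gap by hand to stay dependency-free. Fairlearn offers a comparable demographic parity metric, and a real audit should use such a library. The hiring counts, the function names, and the 0.02 tolerance are all hypothetical.

```python
def selection_rate_gap(rows, group_key, outcome_key):
    """Largest difference in positive-outcome rate between any two groups."""
    totals, positives = {}, {}
    for row in rows:
        group = row[group_key]
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if row[outcome_key] else 0)
    rates = [positives[g] / totals[g] for g in totals]
    return max(rates) - min(rates)

def make_rows(hired_a, total_a, hired_b, total_b):
    """Build toy hiring records for two groups, A and B."""
    rows = [{"group": "A", "hired": i < hired_a} for i in range(total_a)]
    rows += [{"group": "B", "hired": i < hired_b} for i in range(total_b)]
    return rows

def bias_amplified(real_gap, synthetic_gap, tolerance=0.02):
    """Flag synthetic data whose gap exceeds the real gap by more than tolerance."""
    return synthetic_gap > real_gap + tolerance

real = make_rows(60, 100, 40, 100)       # 60% vs 40% hired
synthetic = make_rows(70, 100, 30, 100)  # 70% vs 30% hired

real_gap = selection_rate_gap(real, "group", "hired")
synthetic_gap = selection_rate_gap(synthetic, "group", "hired")
print(f"real gap = {real_gap:.2f}, synthetic gap = {synthetic_gap:.2f}")
print(bias_amplified(real_gap, synthetic_gap))  # True
```

Wiring a check like this into the pipeline as a gate means a biased generation run fails before its output reaches model training.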

Regulatory Acceptance Gaps

Synthetic data generation is not universally accepted across all regulatory contexts. FDA clinical submissions and some financial filings still require real-data validation alongside synthetic data.

Verify regulatory acceptance for your specific jurisdiction before committing to a synthetic-first pipeline in a compliance-sensitive context.

Vendor Lock-In and Portability Risk

Several synthetic data generation platforms use proprietary architectures that complicate migration. Verify that generated data and trained generative models are exportable before signing enterprise contracts.

Portability matters as much as generation quality when evaluating long-term vendor fit.

Vendor Selection Checklist for Synthetic Data Generation

Run this before any synthetic data generation purchase decision:

- Data modality support confirmed for your stack (tabular, image, text, time-series).

- Fidelity benchmarks tested on your actual data sample, not vendor demos.

- Differential privacy and membership inference testing confirmed.

- GDPR-safe testing architecture reviewed by your legal team.

- Bias auditing is built in or compatible with Fairlearn and IBM AI Fairness 360.

- Deployment model matches your infrastructure: cloud API, on-prem, or VPC.

- Generated data and trained models confirmed exportable.

- Pricing verified at your actual target volume, not entry-tier assumptions.

- Regulatory acceptance confirmed for your industry and jurisdiction.

- Vendor financial stability is assessed before deep pipeline integration.

A 2-week paid proof of concept on your real sample data is the only reliable quality test for data generation.

Top Synthetic Data Generation Vendors in 2026

MOSTLY AI

An enterprise synthetic data generation platform for tabular data, widely used across European financial services and insurance.

Key Features:

- Referential integrity is preserved across relational tables automatically.

- Differential privacy controls with configurable epsilon settings built in.

- Smart imputation fills gaps in sparse real datasets during generation runs.

Best For: Financial services and insurance teams needing GDPR-safe synthetic data for QA and model training.

Pricing: Free tier available. Paid plans from about $500/month. Enterprise custom.

Rating: 4.7/5

Gretel.ai

Developer-first synthetic data generation platform with API and SDK support for tabular and text generation across cloud environments.

Key Features:

- Navigator Fine Tuning for domain-specific synthetic data.

- Differential privacy and membership inference testing on every generation run.

- Python SDK makes pipeline integration fast.

Best For: ML engineering teams wanting programmable synthetic data generation with strong privacy guarantees baked in.

Pricing: Free tier. Mid-tier from $500/month. Enterprise custom.

Rating: 4.6/5

Synthesis AI

Specializes in synthetic image and video generation for computer vision pipelines, including human-centric perception model training.

Key Features:

- Photorealistic synthetic humans with controllable demographic attributes.

- Pre-labeled output eliminates manual annotation for model training data pipelines.

- Scenario scripting for rare-event and edge-case synthetic data generation.

Best For: Computer vision teams needing labeled synthetic datasets at scale for detection and perception models.

Pricing: Custom enterprise only.

Rating: 4.4/5

Hazy

Enterprise tabular synthetic data generation built for UK and EU financial institutions with strong FCA and EBA regulatory positioning.

Key Features:

- Regulatory-grade audit trails on every generation run.

- On-premises deployment for strict data sovereignty requirements.

- Integration with existing data governance and lineage frameworks.

Best For: UK and EU banks where compliance documentation matters as much as data quality.

Pricing: No free tier. From $2,000/month. Enterprise custom.

Rating: 4.3/5

YData

Open-source and enterprise synthetic data generation focused on data augmentation for structured and time-series data pipelines.

Key Features:

- YData Fabric open-source framework for self-hosted synthetic data workflows.

- Class balancing automation for imbalanced training datasets.

- Data quality profiling is integrated before and after synthetic data generation runs.

Best For: Teams wanting open-source flexibility with optional enterprise support and no upfront vendor commitment.

Pricing: Open source is free. Paid plans from $300/month. Enterprise custom.

Rating: 4.5/5

Why Patoliya Infotech for Synthetic Data Generation

Building a pipeline that produces fidelity-validated, bias-audited, privacy-compliant data at production scale takes weeks without the right implementation experience.

We implement synthetic data generation infrastructure for ML teams in financial services, healthcare, and enterprise SaaS. Every engagement delivers:

- Modality-matched tool selection based on your actual data structure

- GDPR-safe compliance architecture reviewed before any data is processed

- Bias auditing integrated as a pipeline gate using Fairlearn or IBM AI Fairness 360

Most teams reach a validated proof of concept within 3 to 6 weeks on a scoped delivery timeline.

If your team is evaluating synthetic data generation vendors, let's map your pipeline requirements in one technical call.

Conclusion

Synthetic data generation has moved from experimental workaround to production infrastructure. The market hit $380M in 2024 and is projected to reach $1.1B by 2028. The decision is no longer whether to adopt synthetic data, but which modality and vendor model fits your pipeline. Teams still waiting on real data access approvals are losing development cycles that do not return. Schedule a technical scoping call with Patoliya Infotech and receive a benchmarked implementation plan within 5 business days.