
TLDR

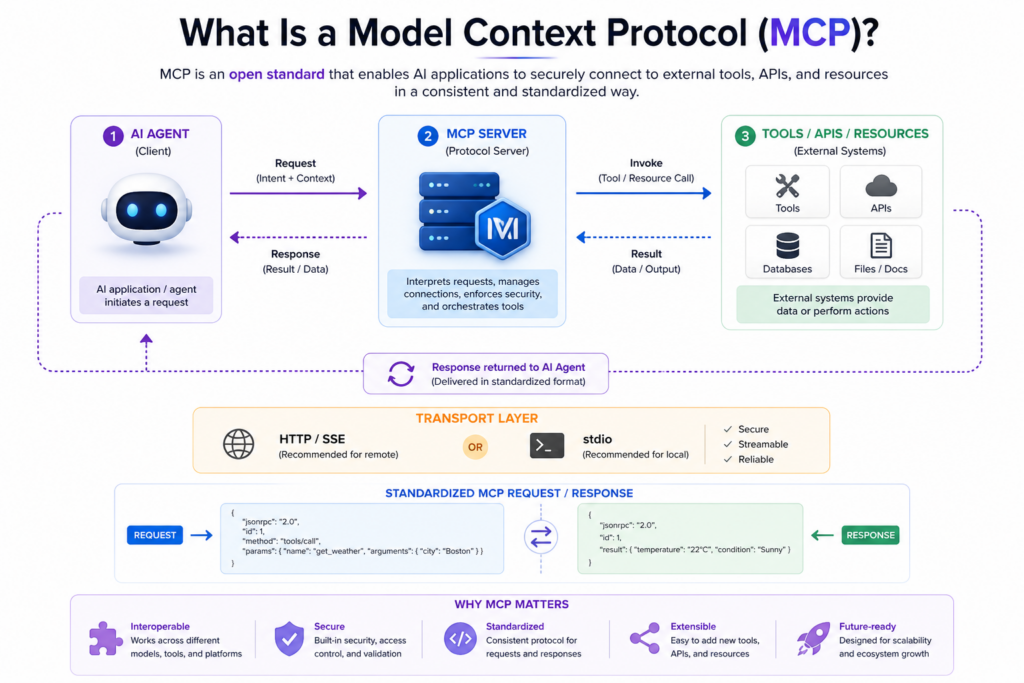

Model context protocol (MCP) is an open standard that lets AI agents connect to external tools, APIs, and data sources through one consistent interface instead of custom one-off builds. Teams that build on MCP server integrations stop rewriting tool connections every time they switch models. One server. Any compliant runtime.

Many AI agent projects fail not at the model layer but in the plumbing. A team spends weeks wiring LLM tool calls into a CRM, then switches models and finds none of that work carries over. The integration was built for a provider, not a standard.

Model context protocol fixes that. It is an open specification, originally released by Anthropic in November 2024, that defines a shared communication layer between AI agents and the external systems they act on. Any compliant client connects to any compliant server, with no custom glue code per combination.

This post covers what model context protocol does at the architecture level, how it compares to alternatives, what implementation costs, and how to pick a vendor who can implement the specification correctly.

Model context protocol is a transport-level open standard governing how AI agents request tools, access resources, and exchange data with external systems, regardless of which model is running the agent.

In plain terms, model context protocol is the agreed-upon contract between an AI agent's orchestration layer and any external system it needs to use: written once, and honored by every compliant model and server without rewriting.

Before model context protocol, every tool integration was a bilateral deal between one model and one system. Switching from GPT-4 to Claude meant rebuilding each integration from scratch. A single MCP server replaces that pattern entirely: it is built once, and every compliant model connects to it without modification.
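The difference is easiest to see as arithmetic. Here is a rough sketch, assuming every model needs every tool: bilateral integrations scale with the product of models and tools, while a shared protocol needs one client per model runtime and one server per tool. The function names are illustrative.

```python
def bilateral_integrations(models: int, tools: int) -> int:
    """Point-to-point: every model/tool pair needs its own connector."""
    return models * tools


def protocol_integrations(models: int, tools: int) -> int:
    """Shared protocol: one client per model runtime plus one server per tool."""
    return models + tools


for models, tools in [(1, 3), (3, 10), (5, 20)]:
    print(
        f"{models} models x {tools} tools: "
        f"{bilateral_integrations(models, tools)} bilateral vs "
        f"{protocol_integrations(models, tools)} with a shared protocol"
    )
```

With a single model the protocol saves nothing (4 pieces versus 3). The gap opens as models and tools multiply: 30 versus 13 at three models and ten tools.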

Model context protocol is not a framework, library, or plugin format. It is a transport-level specification, in the same way that HTTP defines how browsers and servers communicate regardless of what either side is built with. Tool connectivity built on this standard is infrastructure, not application logic.
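To make the HTTP comparison concrete: MCP messages are JSON-RPC 2.0 objects, so a client or server written in any language can produce them. The minimal sketch below builds the two basic message kinds using only the standard library. `tools/list` and `notifications/initialized` are real MCP method names, while the helper functions are illustrative.

```python
import itertools
import json
from typing import Any, Optional

# Monotonic request ids; JSON-RPC matches each response to its request by id.
_next_id = itertools.count(1)


def make_request(method: str, params: Optional[dict[str, Any]] = None) -> dict[str, Any]:
    """A request carries an id and expects exactly one response."""
    message: dict[str, Any] = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        message["params"] = params
    return message


def make_notification(method: str, params: Optional[dict[str, Any]] = None) -> dict[str, Any]:
    """A notification has no id and expects no response."""
    message: dict[str, Any] = {"jsonrpc": "2.0", "method": method}
    if params is not None:
        message["params"] = params
    return message


list_tools = make_request("tools/list")
initialized = make_notification("notifications/initialized")
print(json.dumps(list_tools))
print(json.dumps(initialized))
```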

Model context protocol is built around four capability modules that make tool-augmented language models practical. Each solves a different piece of the agent-to-system communication problem.

MCP tools give agents the ability to call external functions (search, write, query, trigger) through a defined request/response contract. The model never needs to know what is behind the tool: it sends a structured call, and the protocol handles the handoff.
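Here is a sketch of that handoff on the wire, assuming a hypothetical `search_crm` tool. The agent's host sends a `tools/call` request naming the tool and its arguments, and the server answers with a result whose `content` is a list of typed items. The helper names are illustrative, not part of any SDK.

```python
import json
from typing import Any


def tools_call_request(request_id: int, tool_name: str, arguments: dict[str, Any]) -> str:
    """Serialize a tools/call request; the model supplies only a name and arguments."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


def result_text(response: dict[str, Any]) -> str:
    """Join the text items from a tool result's content list."""
    items = response["result"]["content"]
    return "\n".join(item["text"] for item in items if item.get("type") == "text")


# "search_crm" is hypothetical; nothing in the call reveals which CRM backs it.
request = tools_call_request(7, "search_crm", {"query": "Acme Corp", "limit": 5})

# A response in the shape an MCP server returns for a successful call.
response = {
    "jsonrpc": "2.0",
    "id": 7,
    "result": {
        "content": [{"type": "text", "text": "Acme Corp (id 42), active"}],
        "isError": False,
    },
}
print(result_text(response))
```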

Schema discipline is what separates this from ad-hoc function calling: MCP protocol tools define inputs, outputs, and error states in a standard format that any compliant model reads correctly.
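The schema side can be sketched the same way. MCP tool definitions carry a `name`, a `description`, and an `inputSchema` written in JSON Schema. MCP also reports tool execution failures as a normal result with `isError: true`, separately from JSON-RPC protocol errors. The checker below is a deliberately minimal stand-in that only tests required keys, unknown keys, and top-level types. A production server would more likely use a full JSON Schema validator such as the `jsonschema` package. The tool itself is hypothetical.

```python
from typing import Any

# Hypothetical tool definition in the shape MCP advertises via tools/list.
SEARCH_TOOL: dict[str, Any] = {
    "name": "search_crm",
    "description": "Search CRM accounts by company name and return up to `limit` matches.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "query": {"type": "string", "description": "Company name or fragment"},
            "limit": {"type": "integer", "description": "Maximum results"},
        },
        "required": ["query"],
        "additionalProperties": False,
    },
}

_PY_TYPES = {
    "string": str, "integer": int, "number": (int, float),
    "boolean": bool, "object": dict, "array": list,
}


def check_arguments(tool: dict[str, Any], arguments: dict[str, Any]) -> list[str]:
    """Minimal check of required keys, unknown keys, and top-level types only."""
    schema = tool["inputSchema"]
    properties = schema.get("properties", {})
    errors = [
        f"missing required argument: {name}"
        for name in schema.get("required", [])
        if name not in arguments
    ]
    for name, value in arguments.items():
        spec = properties.get(name)
        if spec is None:
            if schema.get("additionalProperties", True) is False:
                errors.append(f"unknown argument: {name}")
            continue
        expected = spec.get("type")
        if expected not in _PY_TYPES:
            continue  # type not covered by this minimal check
        # bool is a subclass of int in Python; JSON Schema treats them separately.
        bool_mismatch = isinstance(value, bool) and expected != "boolean"
        if bool_mismatch or not isinstance(value, _PY_TYPES[expected]):
            errors.append(f"{name}: expected {expected}")
    return errors


print(check_arguments(SEARCH_TOOL, {"limit": "5"}))
# → ['missing required argument: query', 'limit: expected integer']
```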

Model context protocol treats external data as first-class resources with addressable URIs. An agent requests a resource, and the server resolves and returns it. This is where tools and data access converge for enterprise environments: agents can read from databases, document stores, and internal APIs without wrapping each source in a custom function call.
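Here is a minimal server-side sketch of resource reads, assuming an in-memory store. The client sends `resources/read` with a `uri`, and the server returns a `contents` list carrying the URI, a MIME type, and the text. The `crm://` scheme and the stored documents are hypothetical. The `-32002` not-found code follows the error example in the MCP specification, so confirm it against the revision you pin.

```python
from typing import Any

# Hypothetical backing store: URI -> (MIME type, text). Servers may use
# standard schemes such as file:// or define their own, like crm:// here.
RESOURCES: dict[str, tuple[str, str]] = {
    "file:///policies/refunds.md": ("text/markdown", "# Refunds\nFull refund within 30 days."),
    "crm://accounts/42": ("application/json", '{"id": 42, "name": "Acme Corp"}'),
}


def handle_resources_read(request: dict[str, Any]) -> dict[str, Any]:
    """Resolve a resources/read request into a JSON-RPC response."""
    uri = request["params"]["uri"]
    if uri not in RESOURCES:
        return {
            "jsonrpc": "2.0",
            "id": request["id"],
            # Error code taken from the spec's resource-not-found example.
            "error": {"code": -32002, "message": "Resource not found", "data": {"uri": uri}},
        }
    mime_type, text = RESOURCES[uri]
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {"contents": [{"uri": uri, "mimeType": mime_type, "text": text}]},
    }


request = {"jsonrpc": "2.0", "id": 3, "method": "resources/read",
           "params": {"uri": "crm://accounts/42"}}
print(handle_resources_read(request)["result"]["contents"][0]["text"])
```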

Through model context protocol, servers expose reusable prompt templates that standardize how agents phrase requests to specific tools. Teams running MCP server integrations across complex workflows flag this as an underused feature. Without it, the same tool used by different agents produces inconsistent outputs, because each agent was prompted differently.
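Here is a sketch of a server-side prompt, assuming a hypothetical `summarize_ticket` template. The definition advertised through `prompts/list` names its arguments, and `prompts/get` returns ready-to-send `messages`, so every agent that uses the template phrases the request identically. The template wording and argument names are illustrative.

```python
from typing import Any

# Hypothetical template a server advertises through prompts/list.
SUMMARIZE_TICKET: dict[str, Any] = {
    "name": "summarize_ticket",
    "description": "Summarize a support ticket in a fixed three-part format",
    "arguments": [
        {"name": "ticket_id", "description": "Ticket identifier", "required": True},
        {"name": "tone", "description": "'formal' or 'casual'", "required": False},
    ],
}

TEMPLATE = (
    "Summarize support ticket {ticket_id} as three bullets: problem, "
    "actions taken, next step. Use a {tone} tone."
)


def get_prompt(arguments: dict[str, str]) -> dict[str, Any]:
    """Build a prompts/get result so every agent sends identical wording."""
    missing = [
        arg["name"]
        for arg in SUMMARIZE_TICKET["arguments"]
        if arg["required"] and arg["name"] not in arguments
    ]
    if missing:
        raise ValueError(f"missing required prompt arguments: {missing}")
    text = TEMPLATE.format(
        ticket_id=arguments["ticket_id"],
        tone=arguments.get("tone", "formal"),
    )
    return {
        "description": SUMMARIZE_TICKET["description"],
        "messages": [{"role": "user", "content": {"type": "text", "text": text}}],
    }


print(get_prompt({"ticket_id": "T-1001"})["messages"][0]["content"]["text"])
```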

The model context protocol supports one agent invoking another as a tool. This is what makes multi-agent architecture viable at the protocol level rather than requiring a custom message bus on top. Each agent exposes capabilities through a standard MCP server integration, and the calling agent treats it like any other tool.
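The agent-as-tool pattern can be sketched in-process. Here a hypothetical `research_agent` is registered in the same tool table as an ordinary lookup tool, so the caller cannot tell them apart. The stub agent just chains one tool call where a real one would run its own model loop. `call_tool` stands in for a `tools/call` round trip to whichever server hosts the agent.

```python
from typing import Any, Callable

ToolResult = dict[str, Any]
ToolHandler = Callable[[dict[str, Any]], ToolResult]


def text_result(text: str) -> ToolResult:
    """Wrap text in the content-list shape MCP tool results use."""
    return {"content": [{"type": "text", "text": text}], "isError": False}


def lookup_account(args: dict[str, Any]) -> ToolResult:
    # Hypothetical plain tool.
    return text_result(f"Account {args['name']}: status=active")


def research_agent(args: dict[str, Any]) -> ToolResult:
    # Hypothetical sub-agent. A real one would run its own model loop and
    # choose tools itself; this stub chains one call to show the shape.
    account = call_tool("lookup_account", {"name": args["company"]})
    facts = account["content"][0]["text"]
    return text_result(f"Briefing on {args['company']}: {facts}")


# The agent sits in the same table as ordinary tools.
TOOLS: dict[str, ToolHandler] = {
    "lookup_account": lookup_account,
    "research_agent": research_agent,
}


def call_tool(name: str, args: dict[str, Any]) -> ToolResult:
    # Stand-in for a tools/call round trip to the server hosting `name`.
    return TOOLS[name](args)


print(call_tool("research_agent", {"company": "Acme"})["content"][0]["text"])
```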

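MCP runs over two kinds of transport: stdio for local deployments, and HTTP for remote, networked production environments. The original HTTP transport used Server-Sent Events (SSE). The March 2025 revision of the spec replaced it with Streamable HTTP, which can still stream responses over SSE. AI agent tool connectivity at enterprise scale requires the HTTP transport. The same March 2025 revision added an authorization framework based on OAuth 2.1, which makes model context protocol more viable for regulated industries.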
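For the stdio transport, the host launches the server as a subprocess and exchanges JSON-RPC messages over stdin and stdout, one message per line. The spec forbids embedded newlines inside a message. Here is a minimal sketch of that framing, using an in-memory buffer in place of a real pipe. `ping` and `notifications/initialized` are real MCP methods, and the function names are illustrative.

```python
import io
import json
from typing import Any, Iterator, TextIO


def write_message(stream: TextIO, message: dict[str, Any]) -> None:
    # json.dumps without indent emits one line; newlines inside string
    # values are escaped as \n, so the only raw newline is the delimiter.
    stream.write(json.dumps(message) + "\n")
    stream.flush()


def read_messages(stream: TextIO) -> Iterator[dict[str, Any]]:
    # Each non-empty line is exactly one JSON-RPC message.
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


buffer = io.StringIO()  # stands in for the subprocess's stdin/stdout pipe
write_message(buffer, {"jsonrpc": "2.0", "id": 1, "method": "ping"})
write_message(buffer, {"jsonrpc": "2.0", "method": "notifications/initialized"})
buffer.seek(0)
for msg in read_messages(buffer):
    print(msg["method"])
```

Because `json.dumps` escapes newlines inside string values, a message carrying multi-line text still occupies exactly one line on the wire.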

Model context protocol solves four problems teams hit in roughly the same order as their agent systems grow.

A tool set built for one model has zero portability when the model changes. Model context protocol decouples the tool from the model: one MCP server serves Claude, GPT-4o, Gemini, or any compliant runtime. The result is a tool library that compounds in value instead of depreciating with every model change.

Without model context protocol, every vendor invents its own schema and auth pattern. MCP replaces that with one specification to build against and one surface to audit. Security reviews become manageable because there is a single interface, not a different one per integration.

Ad-hoc AI agent tool connectivity rarely defines what an agent is allowed to do. Model context protocol includes session-level capability scoping in the spec: an agent sees only the tools it has been explicitly granted, and every MCP server integration inherits that protocol-level access control by default.
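Here is a host-side sketch of that scoping, assuming a hypothetical per-session grant list. The host filters what `tools/list` shows the agent and refuses calls to anything outside the grant. In practice, fine-grained grants like this usually live in the host application or a gateway in front of the server, so treat the `Session` type and tool names as illustrative rather than as part of the spec.

```python
from dataclasses import dataclass
from typing import Any

# Tools a hypothetical CRM server advertises via tools/list.
SERVER_TOOLS: list[dict[str, Any]] = [
    {"name": "search_crm", "description": "Read-only account search"},
    {"name": "update_crm", "description": "Modify account records"},
    {"name": "send_email", "description": "Email a customer"},
]


@dataclass(frozen=True)
class Session:
    agent_id: str
    granted_tools: frozenset[str]


def visible_tools(session: Session) -> list[dict[str, Any]]:
    """Filter the server's tool list down to what this session was granted."""
    return [tool for tool in SERVER_TOOLS if tool["name"] in session.granted_tools]


def authorize_call(session: Session, tool_name: str) -> None:
    """Refuse a call outside the grant, even if the agent guesses the name."""
    if tool_name not in session.granted_tools:
        raise PermissionError(f"{session.agent_id} is not granted {tool_name!r}")


support = Session("support-bot", frozenset({"search_crm"}))
print([tool["name"] for tool in visible_tools(support)])
authorize_call(support, "search_crm")  # allowed; "send_email" would raise
```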

As workflows grow, context windows fill with noise from unstructured tool responses. Model context protocol structures what crosses the boundary between external systems and the model. That discipline, enforced at the protocol layer rather than left to each integration, is what keeps large-scale deployments operationally stable.

A single-model, single-tool prototype with no plans to scale does not need model context protocol. The spec earns its value at three or more tools, or when model portability becomes a real requirement.

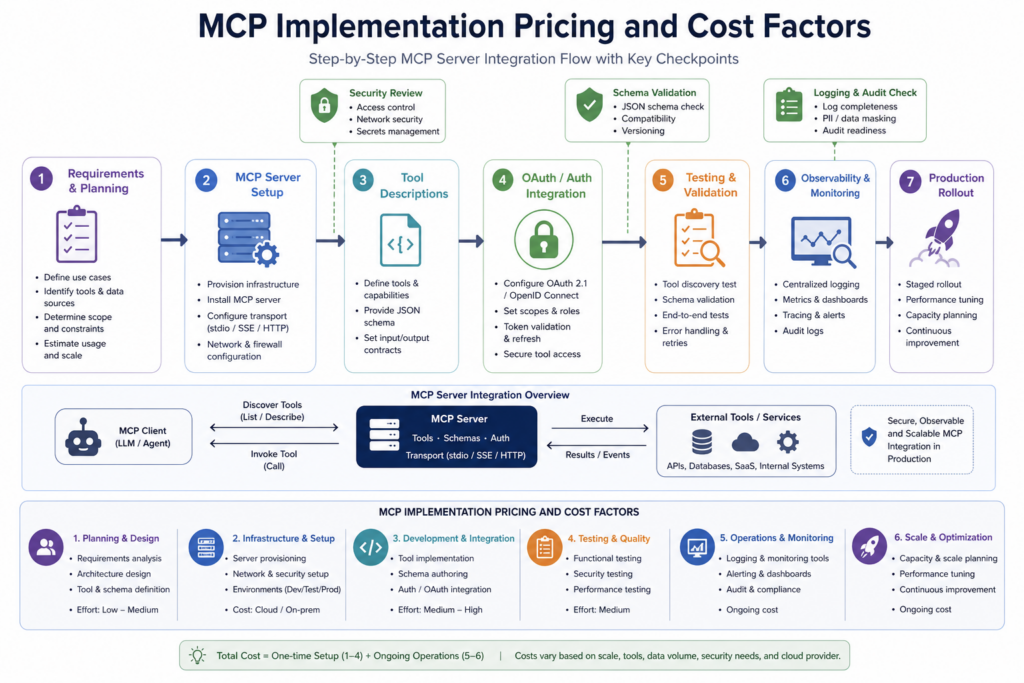

Model context protocol cost scales with three variables: number of tools, transport complexity, and auth requirements.

| Tier | Scope | Cost (USD) | Timeline |
| --- | --- | --- | --- |
| Tier 1: Single server | 1 to 3 tools, stdio | $4,000 to $12,000 | 2 to 4 weeks |
| Tier 2: Multi-tool with auth | 5 to 15 tools, cloud, OAuth 2.1 | $18,000 to $55,000 | 6 to 12 weeks |
| Tier 3: Multi-agent | Multiple servers, agent-to-agent | $60,000 to $200,000+ | 12 to 20 weeks |

Three areas catch teams off guard on MCP server integration builds. First, OAuth 2.1 adds two to four weeks to a Tier 2 build; it is a full auth layer, not a toggle. Second, tool description quality: vague descriptions produce unreliable model behavior and are expensive to fix after deployment. Third, observability for AI agent tool connectivity in production is not optional; it is how you diagnose failures when a tool call returns unexpected results.
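On the observability point, here is a minimal sketch of per-call instrumentation: a decorator that records the tool name, outcome, and latency for every call. It records argument keys rather than values so that customer data does not leak into logs. The decorator, the in-memory `CALL_LOG`, and both example tools are hypothetical. A production system would ship these records to its tracing or metrics stack.

```python
import functools
import time
from typing import Any, Callable

ToolHandler = Callable[[dict[str, Any]], dict[str, Any]]
CALL_LOG: list[dict[str, Any]] = []  # stand-in for a real metrics/tracing sink


def observed(tool_name: str) -> Callable[[ToolHandler], ToolHandler]:
    def decorator(handler: ToolHandler) -> ToolHandler:
        @functools.wraps(handler)
        def wrapper(arguments: dict[str, Any]) -> dict[str, Any]:
            start = time.perf_counter()
            outcome = "exception"  # overwritten unless the handler raises
            try:
                result = handler(arguments)
                outcome = "tool_error" if result.get("isError") else "ok"
                return result
            finally:
                CALL_LOG.append({
                    "tool": tool_name,
                    "outcome": outcome,
                    "duration_ms": (time.perf_counter() - start) * 1000,
                    "arg_keys": sorted(arguments),  # keys only, never values
                })
        return wrapper
    return decorator


@observed("search_crm")
def search_crm(arguments: dict[str, Any]) -> dict[str, Any]:
    return {"content": [{"type": "text", "text": "1 match"}], "isError": False}


@observed("billing_lookup")
def billing_lookup(arguments: dict[str, Any]) -> dict[str, Any]:
    # Simulates an upstream failure reported as a tool execution error.
    return {"content": [{"type": "text", "text": "upstream timeout"}], "isError": True}


search_crm({"query": "Acme"})
billing_lookup({"account_id": 42})
for record in CALL_LOG:
    print(record["tool"], record["outcome"], f"{record['duration_ms']:.2f} ms")
```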

Tier 1 builds usually run fixed-scope. Tier 2 and Tier 3 model context protocol projects tend to run time-and-materials, because teams discover mid-build that existing APIs do not expose data in the shape MCP tools expect. Budget a 20% contingency on any build above $25,000.

Teams standardizing on model context protocol can eliminate an estimated 60 to 80% of the repeated integration effort involved in adding new models, because they build once to a shared specification instead of rewriting connectors for each provider.

Product teams using model context protocol for tool connectivity add new tools in days rather than weeks. The specification defines the interface, while the MCP server handles execution and routing.

Model context protocol also changes how cost scales. With custom integrations, cost grows with the product of models and tools, because every pair needs its own connector. With MCP, the protocol overhead is paid once and each new model or tool adds a single connection, so cost grows with their sum. That difference is a measurable budget advantage.

As Alex Xu explains, teams adopting open specifications retain flexibility as models evolve. AI agent tools connectivity built on model context protocol prevents repeated rebuilds and reduces long-term vendor lock-in.

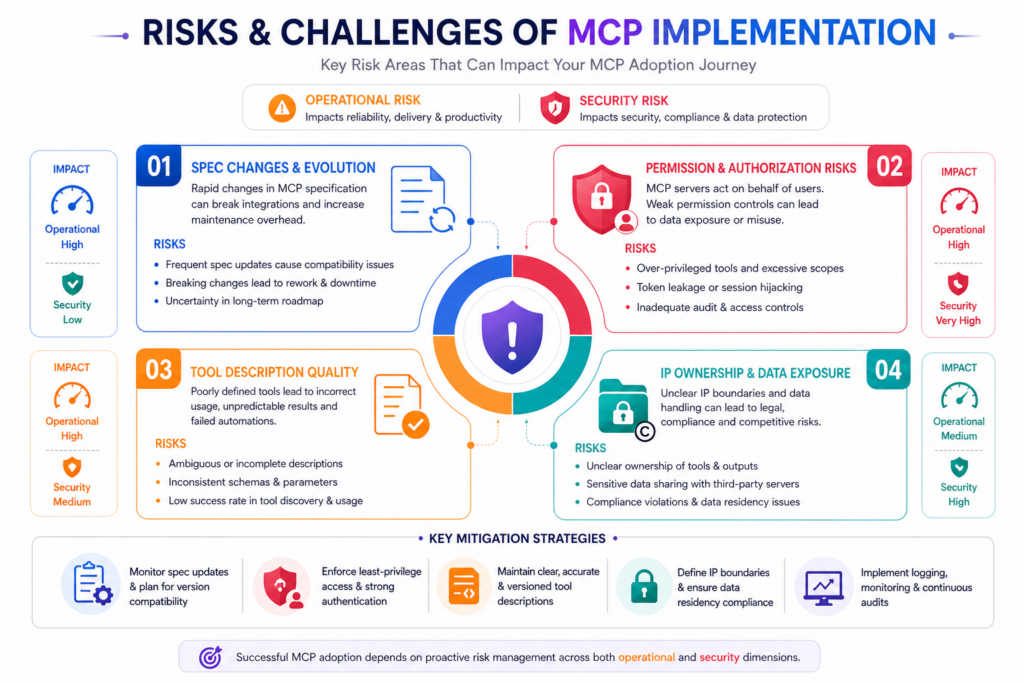

Model context protocol is production-ready, but four risks appear consistently.

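Spec stability: The specification is still evolving. The March 2025 revision, which added OAuth 2.1 authorization and replaced the HTTP+SSE transport with Streamable HTTP, broke builds for teams on earlier versions. Pin your protocol version and choose a vendor who tracks spec changes proactively.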
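Pinning happens in the `initialize` handshake. The client proposes a `protocolVersion`, and the server either accepts it or replies with a version it does support. If the client cannot speak that version, it should disconnect. Here is a minimal sketch, assuming the client supports only the `2025-03-26` revision. The client name and helper functions are illustrative.

```python
from typing import Any

# Pin the revision this client was built and tested against.
PINNED_VERSION = "2025-03-26"
SUPPORTED_VERSIONS = frozenset({PINNED_VERSION})


def initialize_request(request_id: int) -> dict[str, Any]:
    """First message of every session: propose the pinned protocol version."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": PINNED_VERSION,
            "capabilities": {},
            "clientInfo": {"name": "example-client", "version": "0.1.0"},
        },
    }


def check_negotiated_version(response: dict[str, Any]) -> str:
    """Accept the server's reply only if it settled on a version we support."""
    version = response["result"]["protocolVersion"]
    if version not in SUPPORTED_VERSIONS:
        raise RuntimeError(f"server chose unsupported protocol version {version}; disconnect")
    return version


reply = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "protocolVersion": "2025-03-26",
        "capabilities": {"tools": {}},
        "serverInfo": {"name": "example-server", "version": "1.0.0"},
    },
}
print(check_negotiated_version(reply))
```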

Permission overreach: Security misconfiguration is the most common failure in early AI agent tools connectivity deployments. MCP protocol tools include capability scoping, but only when configured correctly. A permissive allow-list is easy to write and hard to audit. Security review before go-live is mandatory.
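One way to make an allow-list auditable is to expand every pattern against the server's actual tool list before go-live. The sketch below assumes a hypothetical policy format of shell-style patterns per agent. It flags wildcards, write access, and patterns that match nothing. The tool names, modes, and checks are illustrative.

```python
from fnmatch import fnmatchcase

# Tools a hypothetical server exposes, tagged read or write.
TOOL_MODES = {
    "search_crm": "read",
    "export_crm": "read",
    "update_crm": "write",
    "delete_account": "write",
}

# Hypothetical allow-list: agent id -> shell-style name patterns.
ALLOW_LIST = {
    "support-bot": ["search_crm"],
    "ops-bot": ["*_crm"],
    "admin-bot": ["*"],
}


def audit(allow_list: dict[str, list[str]], tool_modes: dict[str, str]) -> list[str]:
    """Expand each pattern against real tool names and report risky grants."""
    findings = []
    for agent, patterns in allow_list.items():
        for pattern in patterns:
            matched = sorted(name for name in tool_modes if fnmatchcase(name, pattern))
            if not matched:
                findings.append(f"{agent}: '{pattern}' matches no tool")
                continue
            if any(ch in pattern for ch in "*?["):
                findings.append(f"{agent}: wildcard '{pattern}' expands to {matched}")
            writes = [name for name in matched if tool_modes[name] == "write"]
            if writes:
                findings.append(f"{agent}: '{pattern}' grants write access to {writes}")
    return findings


for finding in audit(ALLOW_LIST, TOOL_MODES):
    print(finding)
```

A flagged grant is not automatically wrong. The point is that every wildcard and every write grant becomes an explicit line item a reviewer signs off on.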

Tool description quality: Under model context protocol, the model chooses tools and fills in their arguments largely from the tool descriptions. Vague descriptions produce inconsistent tool calls. This is a content problem that most engineering-led MCP server integration projects treat as low priority until outputs break.
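Description quality can be partly checked in CI. Here is a rough sketch, assuming tool definitions in MCP's `name`/`description`/`inputSchema` shape. It flags short descriptions and undocumented parameters. The eight-word threshold is arbitrary and both tools are hypothetical. A lint like this catches omissions, not vagueness, so human review still matters.

```python
from typing import Any

MIN_WORDS = 8  # arbitrary threshold for this sketch


def lint_tool(tool: dict[str, Any]) -> list[str]:
    """Flag short descriptions and parameters the model would have to guess about."""
    problems = []
    words = tool.get("description", "").split()
    if len(words) < MIN_WORDS:
        problems.append(f"{tool['name']}: description has {len(words)} words (< {MIN_WORDS})")
    properties = tool.get("inputSchema", {}).get("properties", {})
    for name, spec in properties.items():
        if not spec.get("description"):
            problems.append(f"{tool['name']}: parameter '{name}' is undocumented")
    return problems


vague = {
    "name": "search",
    "description": "Searches stuff.",
    "inputSchema": {"type": "object", "properties": {"q": {"type": "string"}}},
}
clear = {
    "name": "search_crm",
    "description": "Search CRM accounts by company name; returns at most 10 matches with id and status.",
    "inputSchema": {
        "type": "object",
        "properties": {"query": {"type": "string", "description": "Company name or fragment"}},
    },
}
for tool in (vague, clear):
    print(tool["name"], lint_tool(tool))
```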

IP ownership: When you outsource model context protocol development, clarify code ownership before work starts. The vendor market is new enough that contract norms have not settled. Include provisions for early termination.

The model context protocol market is maturing. These four vendors have production track records in AI agent tools connectivity delivery.

Patoliya Infotech delivers model context protocol implementations for mid-market and enterprise clients in fintech, healthcare, and logistics.

Key capabilities:

Best for: Teams moving from prototype to production without building an internal protocol team.

Client review: 4.8/5

LeewayHertz brings AI strategy depth to MCP server integration, which is useful for organizations still defining their architecture before committing to a build.

Key capabilities:

Best for: Enterprise teams needing strategic clarity on AI agent tools connectivity before execution.

Client review: 4.7/5

Simform integrates model context protocol into full AI product builds. It is the right fit for teams building AI-native products rather than adding agents to existing systems.

Key capabilities:

Best for: Startups building an AI-native product from scratch.

Client review: 4.6/5

Intuz delivers AI agent implementations for mobile-first and cross-platform products, with a strong track record across MENA and South Asia.

Key capabilities:

Best for: Cost-sensitive teams needing reliable MCP server integration without enterprise pricing.

Client review: 4.5/5

Model context protocol delivery requires protocol-level judgment. This means knowing when stdio suffices versus when HTTP plus SSE is necessary, how to write tool descriptions that produce consistent behavior, and how to scope permissions that hold under audit.

Patoliya Infotech delivers all three as standard scope:

If your team is ready to move from prototype to a production MCP server integration, Patoliya Infotech is worth a direct conversation. Model context protocol built right the first time saves months of rework. Let's talk about what your build requires.

Model context protocol determines whether the integrations your team builds today continue to work as models evolve. Teams that get this right treat MCP server integration as long-term infrastructure. They choose partners who understand the specification and build MCP protocol tools that remain usable across model changes.

The protocol is production-ready, and the vendor ecosystem is maturing. If your goal is reliable, scalable AI agent tool connectivity, model context protocol is no longer optional. It is the foundation for avoiding repeated rebuilds and protecting long-term ROI. Let's discuss how to implement it correctly from the start.